6 Statistical models

6.1 Simple linear regression

Simple linear regression is the most common “first model” in applied statistics. It answers a basic question:

How does the expected value of an outcome \(Y\) change as a function of a single predictor \(X\)?

Even if your final analysis uses more sophisticated methods (mixed models, GLMs, survival models), simple linear regression remains a key building block. It teaches you how estimation works, why assumptions matter, and how uncertainty is quantified through standard errors, confidence intervals, and hypothesis tests.

In practice, regression has two equally important goals:

1) Explanation (estimating association or effect size), and

2) Prediction (forecasting outcomes at new values of \(X\)).

6.1.1 Linear regeression assumptions

A regression model is only as reliable as its assumptions. In real-world analysis, the assumptions are rarely perfect, but they guide diagnostics and help you understand when inference may break down.

There are four principal assumptions:

Linearity of the relationship between dependent and independent variables.

This means the conditional mean \(E(Y|X)\) is well-approximated by a straight line. If the true relationship is curved, the linear model may still be useful as a local approximation, but interpretation can become misleading.Statistical independence of the errors with \(y\) variable.

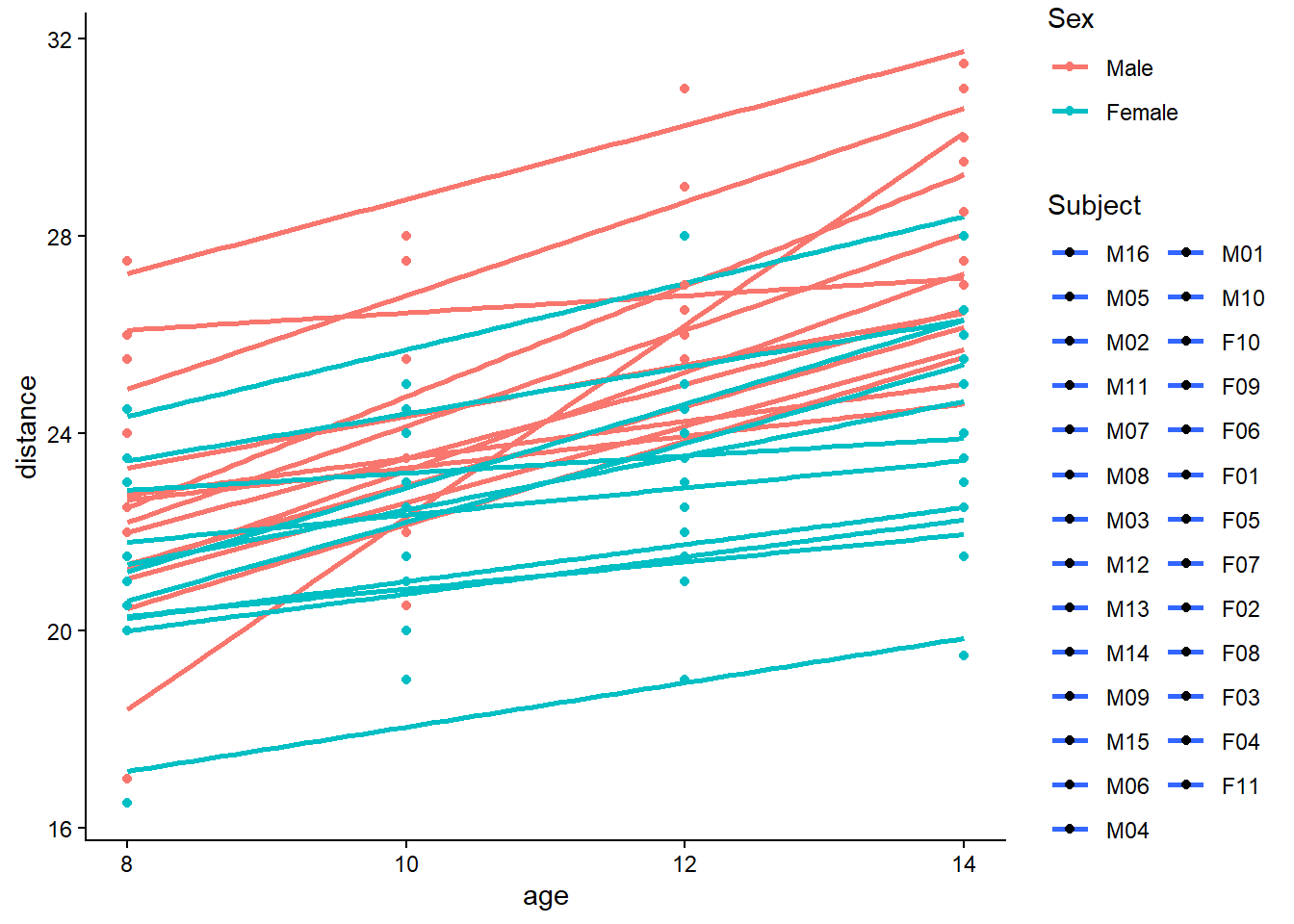

Independence is often violated in longitudinal data, clustered data (e.g., patients within sites), or time series. When independence fails, standard errors are typically wrong—often too small, leading to overly optimistic p-values and confidence intervals that are too narrow.Homoscedasticity (constant variance) of the errors for all \(x\).

If variability increases with \(X\) (a “fanning out” pattern), the model may still estimate the mean trend reasonably, but standard errors and tests can be distorted unless you use robust methods or transform variables.Normality of the error distribution.

Normality matters mainly for small-sample inference. Large samples rely less on strict normality due to asymptotic approximations. Also note: normality is assumed for the errors, not necessarily for \(X\) or \(Y\) marginally.

if independent assumption violated, the estimated standard errors tend to underestimate the true standard error. P value associated thus is lower.

only the prediction errors need to be normally distributed. but with extremely asymmetric or long-tailed, it may be hard to fit them (x and y) into a linear model whose errors will be normally distributed.

6.1.2 Population regression function

The population regression function is the ideal target we would like to know: the true conditional mean of \(Y\) given \(X\). Regression is fundamentally about modeling and estimating this conditional expectation.

Regression is to estimate and/or predict the population mean (expectation) of dependent variable (yi) by a known or a set value of explanatory variables (xi). Population regression line (PRL) is the trajectory of the conditional expectation value given Xi.

\[ E(Y|X_i)=f(X_i)=\beta_1+\beta_2X_i \]

This is an unknown but fixed value (can be estimated).

A key interpretation: - \(\beta_1\) is the expected value of \(Y\) when \(X=0\) (sometimes meaningful, sometimes not). - \(\beta_2\) is the expected change in \(Y\) for a one-unit increase in \(X\).

6.1.3 Population regression model

In the population, actual observations deviate from the regression function due to randomness and unmeasured factors. We represent this deviation as an error term \(u_i\).

\[ Y_i=\beta_1+\beta_2X_i+u_i \]

the errors \(u_i=y_i-\hat{y}_i\) have equal variance

In applied interpretation, \(u_i\) captures everything not explained by \(X\): measurement noise, omitted variables, and inherent randomness.

6.1.4 Sample regression model

In practice we observe data and estimate coefficients. The sample regression model replaces unknown population parameters with estimates (hats), and uses residuals \(e_i\) as estimated errors.

(using hat to indicate sample)

\[ Y_i=\hat{\beta}_1+\hat{\beta}_2X_i+e_i \]

since

\[ u_i \sim N(0,\sigma^2) \] or \[ e_i \sim N(0,\hat{\sigma} ^2) \]

and

i.i.d., independent identically distribution, the probability distributions are all the same and variables are independent of each other.

\[ \begin{align} u_i \sim i.i.d \ N(0,\sigma^2) \end{align} \]

then

\[ \begin{align} Y_i- \beta_1+\beta_2X_i (\hat{Y_i}) &\sim i.i.d \ N(0,\sigma^2)\\ \end{align} \]

This i.i.d. assumption is what allows us to derive standard errors and perform t-tests and F-tests in the classical linear regression framework.

6.1.5 Least squares: minimize \(Q=\sum (Y_i-\hat{Y}_i)^2\)

The ordinary least squares (OLS) estimator chooses coefficients that minimize the total squared residual error. Squaring penalizes large deviations and gives a unique and mathematically convenient solution.

thence, to minimize Q \(\sum{(Y_i-\hat{Y}_i)^2}\) to solve b0 and b1.

\[ \begin{align} Min(Q) &=\sum{(Y_i-\hat{Y}_i)^2}\\ &=\sum{\left ( Y_i-(\hat{\beta}_1+\hat{\beta}_2X_i) \right )^2}\\ &=f(\hat{\beta}_1,\hat{\beta}_2) \end{align} \]

6.1.6 Solve \(\hat{\beta}_1,\hat{\beta}_2\) and variance

Once the least squares problem is solved, you get closed-form estimators for the slope and intercept. The sampling variability of these estimators depends on: - the error variance \(\sigma^2\), - the spread of \(X\) values (more spread → more information → smaller variance).

\[ \begin{align} \begin{split} \hat{\beta}_2 &=\frac{\sum{x_iy_i}}{\sum{x_i^2}}\\ \hat{\beta_1} &=\bar{Y}_i-\hat{\beta}_2\bar{X}_i \end{split} \\ var(\hat{\beta}_2) =\sigma_{\hat{\beta}_2}^2&=\frac{1}{\sum{x_i^2}}\cdot\sigma^2&&\text{} \\ var(\hat{\beta}_1) =\sigma_{\hat{\beta}_1}^2 &=\frac{\sum{X_i^2}}{n\sum{x_i^2}}\cdot\sigma^2 \end{align} \]

A practical takeaway: if your \(X\) values are tightly clustered, \(\sum x_i^2\) is small and the slope becomes hard to estimate precisely.

6.1.7 Calculate the variance \(\hat{\sigma}^2\) of error \(e_i\)

The residual variance is estimated from the residual sum of squares. Conceptually, it measures how much unexplained variability remains after fitting the regression line.

(for sample)

\[ \begin{align} \hat{Y}_i &=\hat{\beta}_1+\hat{\beta}_2X_i \\ e_i &=Y_i-\hat{Y}_i \\ \hat{\sigma}^2 &=\frac{\sum{e_i^2}}{n-1}=\frac{\sum{(Y_i-\hat{Y}_i)^2}}{n-1} \end{align} \]

(Practical note: in classical regression, the unbiased estimator typically uses \(n-2\) in the denominator for simple linear regression because two parameters were estimated. Your expression shows the core idea—estimating variance from squared residuals.)

6.1.8 Sum of squares decomposition

A central identity in regression is that total variability can be decomposed into explained and unexplained components. This is the basis of \(R^2\) and the ANOVA-style F test.

\[ \begin{align} (Y_i-\bar{Y_i}) &= (\hat{Y_i}-\bar{Y_i}) +(Y_i-\hat{Y_i}) \\ \sum{y_i^2} &= \sum{\hat{y_i}^2} +\sum{e_i^2} \\ TSS&=ESS+RSS \end{align} \]

Interpretation: - TSS: total sum of squares (overall variability around the mean) - ESS: explained sum of squares (variability explained by the regression) - RSS: residual sum of squares (unexplained variability)

6.1.9 Coefficient of determination \(R^2\) and goodness of fit

\(R^2\) is the proportion of variability explained by the model. It is descriptive: a higher \(R^2\) means the fitted line tracks the data more closely, but it does not guarantee causality or correctness of assumptions.

\[ \begin{align} r^2 &=\frac{ESS}{TSS}=\frac{\sum{(\hat{Y_i}-\bar{Y})^2}}{\sum{(Y_i-\bar{Y})^2}}\\ &=1-\frac{RSS}{TSS}=1-\frac{\sum{(Y_i-\hat{Y_i})^2}}{\sum{(Y_i-\bar{Y})^2}} \end{align} \]

In practice, always interpret \(R^2\) alongside residual diagnostics. A model can have a decent \(R^2\) but still violate key assumptions (e.g., heteroscedasticity).

6.1.10 Test of regression coefficients

Hypothesis testing in regression typically focuses on whether coefficients differ from zero (or another clinically meaningful value). Under classical assumptions, coefficients are normally distributed around their true values.

since

\[

\begin{align}

\hat{\beta_2} &\sim N(\beta_2,\sigma^2_{\hat{\beta_2}}) \\

\hat{\beta_1} &\sim N(\beta_1,\sigma^2_{\hat{\beta_1}})

\end{align}

\]

and \[ \begin{align} S_{\hat{\beta}_2} &=\sqrt{\frac{1}{\sum{x_i^2}}}\cdot\hat{\sigma} \\ S_{\hat{\beta}_1} &=\sqrt{\frac{\sum{X_i^2}}{n\sum{x_i^2}}}\cdot\hat{\sigma} \end{align} \]

therefore

\[

\begin{align}

t_{\hat{\beta_2}}^{\ast}&=\frac{\hat{\beta_2}-\beta_2}{S_{\hat{\beta_2}}}

=\frac{\hat{\beta_2}}{S_{\hat{\beta_2}}}

=\frac{\hat{\beta_2}}{\sqrt{\frac{1}{\sum{x_i^2}}}\cdot\hat{\sigma}}

\sim t(n-2)

\\

t_{\hat{\beta_1}}^{\ast}&=\frac{\hat{\beta_1}-\beta_1}{S_{\hat{\beta_1}}}

=\frac{\hat{\beta_1}}{S_{\hat{\beta_1}}}

=\frac{\hat{\beta_1}}{\sqrt{\frac{\sum{X_i^2}}{n\sum{x_i^2}}}\cdot\hat{\sigma}}

\sim t(n-2)

\end{align}

\]

In applied reporting, the slope test is often the primary focus, because it corresponds to whether \(X\) is associated with \(Y\) in a linear trend.

6.1.11 Statistical test of model

Beyond individual coefficients, we may want to test whether the model as a whole explains a statistically significant amount of variability compared with a null model.

since \[ \begin{align} Y_i&\sim i.i.d \ N(\beta_1+\beta_2X_i,\sigma^2)\\ \end{align} \]

and

\[

\begin{align}

ESS&=\sum{(\hat{Y_i}-\bar{Y})^2} \sim \chi^2(df_{ESS}) \\

RSS&=\sum{(Y_i-\hat{Y_i})^2} \sim \chi^2(df_{RSS})

\end{align}

\]

therefore \[ \begin{align} F^{\ast}&=\frac{ESS/df_{ESS}}{RSS/df_{RSS}}=\frac{MSS_{ESS}}{MSS_{RSS}}\\ &=\frac{\sum{(\hat{Y_i}-\bar{Y})^2}/df_{ESS}}{\sum{(Y_i-\hat{Y_i})^2}/df_{RSS}} \\ &=\frac{\hat{\beta_2}^2\sum{x_i^2}}{\sum{e_i^2}/{(n-2)}}\\ &=\frac{\hat{\beta_2}^2\sum{x_i^2}}{\hat{\sigma}^2} \end{align} \]

The F test evaluates whether the explained variation (ESS) is large relative to residual variation (RSS), after accounting for degrees of freedom.

6.1.12 Mean prediction

Prediction in regression has two common targets:

- Mean prediction: the expected outcome for individuals with a given \(X_0\).

- Individual prediction: the outcome for a new single individual with \(X_0\).

Mean prediction is more precise because it estimates an average, not a single future value.

since

\[ \begin{align} \mu_{\hat{Y}_0}&=E(\hat{Y}_0)\\ &=E(\hat{\beta}_1+\hat{\beta}_2X_0)\\ &=\beta_1+\beta_2X_0\\ &=E(Y|X_0) \end{align} \]

and \[ \begin{align} var(\hat{Y}_0)&=\sigma^2_{\hat{Y}_0}\\ &=E(\hat{\beta}_1+\hat{\beta}_2X_0)\\ &=\sigma^2 \left( \frac{1}{n}+ \frac{(X_0-\bar{X})^2}{\sum{x_i^2}} \right) \end{align} \]

therefore \[ \begin{align} \hat{Y}_0& \sim N(\mu_{\hat{Y}_0},\sigma^2_{\hat{Y}_0})\\ \hat{Y}_0& \sim N \left(E(Y|X_0), \sigma^2 \left( \frac{1}{n}+ \frac{(X_0-\bar{X})^2}{\sum{x_i^2}} \right) \right) \end{align} \]

then construct t statistic to estimate CI

\[

\begin{align}

t_{\hat{Y}_0}& =\frac{\hat{Y}_0-E(Y|X_0)}{S_{\hat{Y}_0}} \sim t(n-2)

\end{align}

\]

\[ \begin{align} \hat{Y}_0-t_{1-\alpha/2}(n-2) \cdot S_{\hat{Y}_0} \leq E(Y|X_0) \leq \hat{Y}_0+t_{1-\alpha/2}(n-2) \cdot S_{\hat{Y}_0} \end{align} \]

Interpretation: the CI here is for the mean response at \(X_0\). It is narrow when: - \(n\) is large, and - \(X_0\) is close to \(\bar{X}\) (more information near the center of the data).

6.1.13 Individual prediction

Individual prediction intervals are wider because they must account for: - uncertainty in estimating the mean trend, and - the irreducible random error for a new observation.

since

\[ \begin{align} (Y_0-\hat{Y}_0)& \sim N \left(\mu_{(Y_0-\hat{Y}_0)},\sigma^2_{(Y_0-\hat{Y}_0)} \right)\\ (Y_0-\hat{Y}_0)& \sim N \left(0, \sigma^2 \left(1+ \frac{1}{n}+ \frac{(X_0-\bar{X})^2}{\sum{x_i^2}} \right) \right) \end{align} \]

and Construct t statistic

\[

\begin{align}

t_{\hat{Y}_0}& =\frac{\hat{Y}_0-E(Y|X_0)}{S_{\hat{Y}_0}} \sim t(n-2)

\end{align}

\]

and \[ \begin{align} S_{\hat{Y}_0}& = \sqrt{\hat{\sigma}^2 \left( \frac{1}{n}+ \frac{(X_0-\bar{X})^2}{\sum{x_i^2}} \right)} \end{align} \]

therefore

\[ \begin{align} \hat{Y}_0-t_{1-\alpha/2}(n-2) \cdot S_{\hat{Y}_0} \leq E(Y|X_0) \leq \hat{Y}_0+t_{1-\alpha/2}(n-2) \cdot S_{\hat{Y}_0} \end{align} \]

it is harder to predict your weight based on your age than to predict the mean weight of people who are your age. so, the interval of individual prediction is wider than those of mean prediction.

A practical mental model: - Mean CI answers: “What is the expected mean outcome at \(X_0\)?” - Prediction interval answers: “Where might a new individual outcome fall at \(X_0\)?”

6.2 Multiple linear regression

Multiple linear regression generalizes the simple model by allowing multiple predictors. The key shift is that each coefficient is interpreted as an effect holding other variables constant.

6.2.1 Matrix format

\[ \begin{align} Y_i&=\beta_1+\beta_2X_{2i}+\beta_3X_{3i}+\cdots+\beta_kX_{ki}+u_i && \ \end{align} \]

\[ \begin{equation} \begin{bmatrix} Y_1 \\ Y_2 \\ \cdots \\ Y_n \\ \end{bmatrix} = \begin{bmatrix} 1 & X_{21} & X_{31} & \cdots & X_{k1} \\ 1 & X_{22} & X_{32} & \cdots & X_{k2} \\ \cdots & \cdots & \cdots & \cdots & \cdots \\ 1 & X_{2n} & X_{3n} & \cdots & X_{kn} \end{bmatrix} \begin{bmatrix} \beta_1 \\ \beta_2 \\ \vdots \\ \beta_k \\ \end{bmatrix}+ \begin{bmatrix} u_1 \\ u_2 \\ \vdots \\ u_n \\ \end{bmatrix} \end{equation} \]

\[ \begin{alignat}{4} \mathbf{y} &= &\mathbf{X}&\mathbf{\beta}&+&\mathbf{u} \\ (n \times 1) & &{(n \times k)} &{(k \times 1)}&+&{(n \times 1)} \end{alignat} \]

The matrix form is not just notation—it simplifies derivations and makes the estimator compact and general.

6.2.2 Variance covariance matrix of random errors

The classical assumption is that errors are independent, have equal variance, and have zero covariance. This leads to a diagonal variance-covariance structure proportional to the identity matrix.

because \[ \mathbf{u} \sim N(\mathbf{0},\sigma^2\mathbf{I})\text{ population}\\ \mathbf{e} \sim N(\mathbf{0},\sigma^2\mathbf{I})\text{ sample}\ \]

therefore \[ \begin{align} var-cov(\mathbf{u})&=E(\mathbf{uu'})\\ &= \begin{bmatrix} \sigma_1^2 & \sigma_{12}^2 &\cdots &\sigma_{1n}^2\\ \sigma_{21}^2 & \sigma_2^2 &\cdots &\sigma_{2n}^2\\ \vdots & \vdots &\vdots &\vdots \\ \sigma_{n1}^2 & \sigma_{n2}^2 &\cdots &\sigma_n^2\\ \end{bmatrix} && \leftarrow (E{(u_i)}=0)\\ &= \begin{bmatrix} \sigma^2 & \sigma_{12}^2 &\cdots &\sigma_{1n}^2\\ \sigma_{21}^2 & \sigma^2 &\cdots &\sigma_{2n}^2\\ \vdots & \vdots &\vdots &\vdots \\ \sigma_{n1}^2 & \sigma_{n2}^2 &\cdots &\sigma^2\\ \end{bmatrix} && \leftarrow (var{(u_i)}=\sigma^2)\\ &= \begin{bmatrix} \sigma^2 & 0 &\cdots &0\\ 0 & \sigma^2 &\cdots &0\\ \vdots & \vdots &\vdots &\vdots \\ 0 & 0 &\cdots &\sigma^2\\ \end{bmatrix} && \leftarrow (cov{(u_i,u_j)}=0,i \neq j)\\ &=\sigma^2 \begin{bmatrix} 1 & 0 &\cdots &0\\ 0 & 1 &\cdots &0\\ \vdots & \vdots &\vdots &\vdots \\ 0 & 0 &\cdots &1\\ \end{bmatrix}\\ &=\sigma^2\mathbf{I} \end{align} \]

In applied work, this assumption is often challenged by clustering and repeated measures. When violated, analysts may move to robust standard errors, GLS, or mixed effects models.

6.2.3 Minimize \(Q=\sum (y-\hat{y})^2\)

In matrix form, OLS still minimizes the squared residuals, but the algebra becomes compact and scalable.

\[ \begin{align} Q&=\sum{e_i^2}\\ &=\mathbf{e'e}\\ &=\mathbf{(y-X\hat{\beta})'(y-X\hat{\beta})}\\ &=\mathbf{y'y-2\hat{\beta}'X'y+\hat{\beta}'X'X\hat{\beta}} \end{align} \]

6.2.4 Solve \(\hat{\beta}\) by derivation

Setting the derivative with respect to \(\hat{\beta}\) equal to zero yields the normal equations. Solving them gives the closed-form OLS estimator.

(population=sample)

\[ \begin{align} \frac{\partial Q}{\partial \mathbf{\hat{\beta}}}&=0\\ \frac{\partial(\mathbf{y'y-2\hat{\beta}'X'y+\hat{\beta}'X'X\hat{\beta}})}{\partial \mathbf{\hat{\beta}}}&=0\\ -2\mathbf{X'y}+2\mathbf{X'X\hat{\beta}}&=0\\ -\mathbf{X'y}+\mathbf{X'X\hat{\beta}}&=0\\ \mathbf{X'X\hat{\beta}} &=\mathbf{X'y} \end{align} \]

\[ \begin{align} \mathbf{\hat{\beta}} &=\mathbf{(X'X)^{-1}X'y} \end{align} \]

Interpretation: \((X'X)^{-1}\) reflects the information in the design matrix. When predictors are highly correlated (multicollinearity), \(X'X\) becomes nearly singular and coefficient estimates become unstable.

6.2.5 Solve \(var\text{-}cov(\mathbf{\hat{\beta}})\)

The variance-covariance matrix of \(\hat{\beta}\) is the core object for inference in multiple regression. It contains: - variances of each coefficient (diagonal), - covariances between coefficients (off-diagonal).

\[ \begin{align} var-cov(\mathbf{\hat{\beta}}) &=\mathbf{E\left( \left(\hat{\beta}-E(\hat{\beta}) \right) \left( \hat{\beta}-E(\hat{\beta}) \right )' \right)}\\ &=\mathbf{E\left( \left(\hat{\beta}-{\beta} \right) \left( \hat{\beta}-\beta \right )' \right)} \\ &=\mathbf{E\left( \left((X'X)^{-1}X'u \right) \left( (X'X)^{-1}X'u \right )' \right)} \\ &=\mathbf{E\left( (X'X)^{-1}X'uu'X(X'X)^{-1} \right)} \\ &= \mathbf{(X'X)^{-1}X'E(uu')X(X'X)^{-1}} \\ &= \mathbf{(X'X)^{-1}X'}\sigma^2\mathbf{IX(X'X)^{-1}} \\ &= \sigma^2\mathbf{(X'X)^{-1}X'X(X'X)^{-1}} \\ &= \sigma^2\mathbf{(X'X)^{-1}} \\ \end{align} \]

6.2.6 Solve \(S^2(\mathbf{\hat{\beta}})\) (sample)

In practice, \(\sigma^2\) is unknown. We estimate it from residuals and then plug it into the variance-covariance formula.

where \[ \begin{align} \hat{\sigma}^2&=\frac{\sum{e_i^2}}{n-k}=\frac{\mathbf{e'e}}{n-k} \\ E(\hat{\sigma}^2)&=\sigma^2 \end{align} \]

therefore \[ \begin{align} S^2_{ij}(\mathbf{\hat{\beta}}) &= \hat{\sigma}^2\mathbf{(X'X)^{-1}} \\ &= \frac{\mathbf{e'e}}{n-k}\mathbf{(X'X)^{-1}} \\ \end{align} \]

which is variance-covariance of coefficients

6.2.7 Sum of squares decomposition (matrix format)

The same TSS/ESS/RSS decomposition generalizes to multiple regression, forming the basis of \(R^2\) and the overall F test.

\[ \begin{align} TSS&=\mathbf{y'y}-n\bar{Y}^2 \\ RSS&=\mathbf{ee'}=\mathbf{yy'-\hat{\beta}'X'y} \\ ESS&=\mathbf{\hat{\beta}'X'y}-n\bar{Y}^2 \end{align} \]

6.2.8 Determination coefficient \(R^2\) and goodness of fit

\[ \begin{align} R^2&=\frac{ESS}{TSS}\\ &=\frac{\mathbf{\hat{\beta}'X'y}-n\bar{Y}^2}{\mathbf{y'y}-n\bar{Y}^2} \end{align} \]

Interpretation remains the same: \(R^2\) is descriptive goodness-of-fit. In multiple regression it almost always increases as you add predictors, which is why adjusted \(R^2\) or out-of-sample validation is often preferred for model selection.

6.2.9 Test of regression coefficients

Multiple regression inference typically includes: - individual coefficient tests (is \(\beta_j=0\)?), - joint tests (are multiple coefficients simultaneously zero?).

because \[ \begin{align} \mathbf{u}&\sim N(\mathbf{0},\sigma^2\mathbf{I}) \\ \mathbf{\hat{\beta}} &\sim N\left(\mathbf{\beta},\sigma^2\mathbf{X'X}^{-1} \right) \\ \end{align} \]

therefore

(for all coefficients test, vector, see above \(S_{\hat{\beta}}^2\) )

\[

\begin{align}

\mathbf{t_{\hat{\beta}}}&=\mathbf{\frac{\hat{\beta}-\beta}{S_{\hat{\beta}}}}

\sim \mathbf{t(n-k)}

\end{align}

\]

(for individual coefficient test)

\[

\begin{align}

\mathbf{t_{\hat{\beta}}^{\ast}}&=\frac{\mathbf{\hat{\beta}}}{\mathbf{\sqrt{S^2_{ij}(\hat{\beta}_{kk})}}}

\end{align}

\]

where \[ S^2_{ij}(\hat{\beta}_{kk})=[s^2_{\hat{\beta}_1},s^2_{\hat{\beta}_2},\cdots,s^2_{\hat{\beta}_k}]' \]

they are on diagonal line of the matrix of \(S^2(\mathbf{\hat{\beta}})\)

6.2.10 Test of model

The overall model test compares: - an unrestricted model with predictors, versus - a restricted model (often intercept-only).

unrestricted model \[ \begin{align} u_i &\sim i.i.d \ N(0,\sigma^2)\\ Y_i&\sim i.i.d \ N(\beta_1+\beta_2X_i+\cdots+\beta_kX_i,\sigma^2)\\ RSS_U&=\sum{(Y_i-\hat{Y_i})^2} \sim \chi^2(n-k) \\ \end{align} \]

restricted model \[ \begin{align} u_i &\sim i.i.d \ N(0,\sigma^2)\\ Y_i&\sim i.i.d \ N(\beta_1,\sigma^2)\\ RSS_R&=\sum{(Y_i-\hat{Y_i})^2} \sim \chi^2(n-1) \\ \end{align} \]

F test \[ \begin{align} F^{\ast}&=\frac{(RSS_R-RSS_U)/(k-1)}{RSS_U/(n-k)} \\ &=\frac{ESS_U/df_{ESS_U}}{RSS_U/df_{RSS_U}} \\ &\sim F(df_{ESS_U},df_{RSS_U}) \end{align} \]

\[ \begin{align} F^{\ast}&=\frac{ESS_U/df_{ESS_U}}{RSS_U/df_{RSS_U}} =\frac{\left(\mathbf{\hat{\beta}'X'y}-n\bar{Y}^2 \right)/{(k-1)}}{\left(\mathbf{yy'-\hat{\beta}'X'y}\right)/{(n-k)}} \end{align} \]

6.2.11 Mean prediction (multiple regression)

The mean prediction generalizes naturally: you plug in a covariate vector \(X_0\). The uncertainty depends on the leverage of \(X_0\) through \(X_0(X'X)^{-1}X_0'\).

since \[ \begin{align} E(\hat{Y}_0)&=E\mathbf{(X_0\hat{\beta})}=\mathbf{X_0\beta}=E\mathbf{(Y_0)}\\ var(\hat{Y}_0)&=E\mathbf{(X_0\hat{\beta}-X_0\beta)}^2\\ &=E\mathbf{\left( X_0(\hat{\beta}-\beta)(\hat{\beta}-\beta)'X_0' \right)}\\ &=E\mathbf{X_0\left( (\hat{\beta}-\beta)(\hat{\beta}-\beta)' \right)X_0'}\\ &=\sigma^2\mathbf{X_0\left( X'X \right)^{-1}X_0'}\\ \end{align} \]

and \[ \begin{align} \hat{Y}_0& \sim N(\mu_{\hat{Y}_0},\sigma^2_{\hat{Y}_0})\\ \hat{Y}_0& \sim N\left(E(Y_0|X_0), \sigma^2\mathbf{X_0(X'X)^{-1}X_0'}\right) \end{align} \]

construct t statistic

\[

\begin{align}

t_{\hat{Y}_0}& =\frac{\hat{Y}_0-E(Y|X_0)}{S_{\hat{Y}_0}}

&\sim t(n-k)

\end{align}

\]

therefore \[ \begin{align} \hat{Y}_0-t_{1-\alpha/2}(n-2) \cdot S_{\hat{Y}_0} \leq E(Y|X_0) \leq \hat{Y}_0+t_{1-\alpha/2}(n-2) \cdot S_{\hat{Y}_0} \end{align} \]

where \[ \begin{align} \mathbf{S_{\hat{Y}_0}} &=\sqrt{\hat{\sigma}^2X_0(X'X)^{-1}X_0'} \\ \hat{\sigma}^2&=\frac{\mathbf{ee'}}{(n-k)} \end{align} \]

6.2.12 Individual prediction (multiple regression)

Individual prediction adds the irreducible error term for a new observation, making the interval wider than the mean-response interval.

since \[ \begin{align} e_0&=Y_0-\hat{Y}_0 \end{align} \]

and \[ \begin{align} E(e_0)&=E(Y_0-\hat{Y}_0)\\ &=E(\mathbf{X_0\beta}+u_0-\mathbf{X_0\hat{\beta}})\\ &=E\left(u_0-\mathbf{X_0 (\hat{\beta}- \beta)} \right)\\ &=E\left(u_0-\mathbf{X_0 (X'X)^{-1}X'u} \right)\\ &=0 \end{align} \]

\[ \begin{align} var(e_0)&=E(Y_0-\hat{Y}_0)^2\\ &=E(e_0^2)\\ &=E\left(u_0-\mathbf{X_0 (X'X)^{-1}X'u} \right)^2\\ &=\sigma^2\left( 1+ \mathbf{X_0(X'X)^{-1}X_0'}\right) \end{align} \]

and

\[

\begin{align}

e_0& \sim N(\mu_{e_0},\sigma^2_{e_0})\\

e_0& \sim N\left(0, \sigma^2\left(1+\mathbf{X_0(X'X)^{-1}X_0'}\right)\right)

\end{align}

\]

construct a t statistic

\[

\begin{align}

t_{e_0}& =\frac{\hat{Y}_0-Y_0}{S_{e_0}}

\sim t(n-k)

\end{align}

\]

therefore \[ \begin{align} \hat{Y}_0-t_{1-\alpha/2}(n-2) \cdot S_{Y_0-\hat{Y}_0} \leq (Y_0|X_0) \leq \hat{Y}_0+t_{1-\alpha/2}(n-2) \cdot S_{Y_0-\hat{Y}_0} \end{align} \]

where \[ \begin{align} S_{Y_0-\hat{Y}_0}=S_{e_0} &=\sqrt{\hat{\sigma}^2 \left( 1+X_0(X'X)^{-1}X_0' \right) } \\ \hat{\sigma}^2&=\frac{\mathbf{ee'}}{(n-k)} \end{align} \]

A final practical takeaway: - If your goal is decision-making about the average response at a covariate profile, use mean-response inference. - If your goal is forecasting an individual outcome, expect much wider uncertainty bands, even with a well-fitted model.

6.3 Multiple linear regression practice

This section is a hands-on workflow for multiple linear regression using the classic Boston housing dataset. The goal is not only to fit a model, but to practice the full sequence that a statistician typically follows in real projects:

- understand the dataset and variable types,

- check missingness and data quality,

- explore distributions and correlations,

- consider transformations for modeling stability,

- build training/test splits for honest evaluation,

- fit models (baseline, stepwise, polynomial, interaction, robust),

- check assumptions and diagnostics,

- compare models using information criteria and cross-validation,

- interpret coefficients and relative importance,

- perform prediction and validate performance.

Even though this example is not a clinical dataset, the workflow is transferable to many applied settings.

6.3.1 Load required packages

We start by loading packages that support regression modeling, data exploration, and diagnostics:

MASS: contains the Boston dataset and robust regression (rlm)psych: descriptive summaries and EDA panelscar: regression utilities like VIF

6.3.2 Loading and describing data

We load the dataset and create a working copy. In a real analysis, it is good practice to keep a pristine “original” object and perform transformations on copies.

describe() provides a compact summary: N, mean, SD, min/max, and distribution hints for each variable. This is often more informative than simply printing the first few rows.

## data_ori

##

## 14 Variables 506 Observations

## --------------------------------------------------------------------------------

## crim

## n missing distinct Info Mean Gmd .05 .10

## 506 0 504 1 3.614 5.794 0.02791 0.03819

## .25 .50 .75 .90 .95

## 0.08204 0.25651 3.67708 10.75300 15.78915

##

## lowest : 0.00632 0.00906 0.01096 0.01301 0.01311

## highest: 45.7461 51.1358 67.9208 73.5341 88.9762

## --------------------------------------------------------------------------------

## zn

## n missing distinct Info Mean Gmd .05 .10

## 506 0 26 0.603 11.36 18.77 0.0 0.0

## .25 .50 .75 .90 .95

## 0.0 0.0 12.5 42.5 80.0

##

## lowest : 0 12.5 17.5 18 20 , highest: 82.5 85 90 95 100

## --------------------------------------------------------------------------------

## indus

## n missing distinct Info Mean Gmd .05 .10

## 506 0 76 0.982 11.14 7.705 2.18 2.91

## .25 .50 .75 .90 .95

## 5.19 9.69 18.10 19.58 21.89

##

## lowest : 0.46 0.74 1.21 1.22 1.25 , highest: 18.1 19.58 21.89 25.65 27.74

## --------------------------------------------------------------------------------

## chas

## n missing distinct Info Sum Mean Gmd

## 506 0 2 0.193 35 0.06917 0.129

##

## --------------------------------------------------------------------------------

## nox

## n missing distinct Info Mean Gmd .05 .10

## 506 0 81 1 0.5547 0.1295 0.4092 0.4270

## .25 .50 .75 .90 .95

## 0.4490 0.5380 0.6240 0.7130 0.7400

##

## lowest : 0.385 0.389 0.392 0.394 0.398, highest: 0.713 0.718 0.74 0.77 0.871

## --------------------------------------------------------------------------------

## rm

## n missing distinct Info Mean Gmd .05 .10

## 506 0 446 1 6.285 0.7515 5.314 5.594

## .25 .50 .75 .90 .95

## 5.886 6.208 6.623 7.152 7.588

##

## lowest : 3.561 3.863 4.138 4.368 4.519, highest: 8.375 8.398 8.704 8.725 8.78

## --------------------------------------------------------------------------------

## age

## n missing distinct Info Mean Gmd .05 .10

## 506 0 356 0.999 68.57 31.52 17.72 26.95

## .25 .50 .75 .90 .95

## 45.02 77.50 94.07 98.80 100.00

##

## lowest : 2.9 6 6.2 6.5 6.6 , highest: 98.8 98.9 99.1 99.3 100

## --------------------------------------------------------------------------------

## dis

## n missing distinct Info Mean Gmd .05 .10

## 506 0 412 1 3.795 2.298 1.462 1.628

## .25 .50 .75 .90 .95

## 2.100 3.207 5.188 6.817 7.828

##

## lowest : 1.1296 1.137 1.1691 1.1742 1.1781

## highest: 9.2203 9.2229 10.5857 10.7103 12.1265

## --------------------------------------------------------------------------------

## rad

## n missing distinct Info Mean Gmd

## 506 0 9 0.959 9.549 8.518

##

## Value 1 2 3 4 5 6 7 8 24

## Frequency 20 24 38 110 115 26 17 24 132

## Proportion 0.040 0.047 0.075 0.217 0.227 0.051 0.034 0.047 0.261

##

## For the frequency table, variable is rounded to the nearest 0

## --------------------------------------------------------------------------------

## tax

## n missing distinct Info Mean Gmd .05 .10

## 506 0 66 0.981 408.2 181.7 222 233

## .25 .50 .75 .90 .95

## 279 330 666 666 666

##

## lowest : 187 188 193 198 216, highest: 432 437 469 666 711

## --------------------------------------------------------------------------------

## ptratio

## n missing distinct Info Mean Gmd .05 .10

## 506 0 46 0.978 18.46 2.383 14.70 14.75

## .25 .50 .75 .90 .95

## 17.40 19.05 20.20 20.90 21.00

##

## lowest : 12.6 13 13.6 14.4 14.7, highest: 20.9 21 21.1 21.2 22

## --------------------------------------------------------------------------------

## black

## n missing distinct Info Mean Gmd .05 .10

## 506 0 357 0.986 356.7 65.5 84.59 290.27

## .25 .50 .75 .90 .95

## 375.38 391.44 396.23 396.90 396.90

##

## lowest : 0.32 2.52 2.6 3.5 3.65 , highest: 396.28 396.3 396.33 396.42 396.9

## --------------------------------------------------------------------------------

## lstat

## n missing distinct Info Mean Gmd .05 .10

## 506 0 455 1 12.65 7.881 3.708 4.680

## .25 .50 .75 .90 .95

## 6.950 11.360 16.955 23.035 26.808

##

## lowest : 1.73 1.92 1.98 2.47 2.87 , highest: 34.37 34.41 34.77 36.98 37.97

## --------------------------------------------------------------------------------

## medv

## n missing distinct Info Mean Gmd .05 .10

## 506 0 229 1 22.53 9.778 10.20 12.75

## .25 .50 .75 .90 .95

## 17.02 21.20 25.00 34.80 43.40

##

## lowest : 5 5.6 6.3 7 7.2 , highest: 46.7 48.3 48.5 48.8 50

## --------------------------------------------------------------------------------summary() is a base R quick scan: it gives min/median/mean/max for numeric variables and counts for factors. This is a standard first step to detect strange ranges or unrealistic values.

| crim | zn | indus | chas | nox | rm | age | dis | rad | tax | ptratio | black | lstat | medv | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Min. : 0.00632 | Min. : 0.00 | Min. : 0.46 | Min. :0.00000 | Min. :0.3850 | Min. :3.561 | Min. : 2.90 | Min. : 1.130 | Min. : 1.000 | Min. :187.0 | Min. :12.60 | Min. : 0.32 | Min. : 1.73 | Min. : 5.00 | |

| 1st Qu.: 0.08205 | 1st Qu.: 0.00 | 1st Qu.: 5.19 | 1st Qu.:0.00000 | 1st Qu.:0.4490 | 1st Qu.:5.886 | 1st Qu.: 45.02 | 1st Qu.: 2.100 | 1st Qu.: 4.000 | 1st Qu.:279.0 | 1st Qu.:17.40 | 1st Qu.:375.38 | 1st Qu.: 6.95 | 1st Qu.:17.02 | |

| Median : 0.25651 | Median : 0.00 | Median : 9.69 | Median :0.00000 | Median :0.5380 | Median :6.208 | Median : 77.50 | Median : 3.207 | Median : 5.000 | Median :330.0 | Median :19.05 | Median :391.44 | Median :11.36 | Median :21.20 | |

| Mean : 3.61352 | Mean : 11.36 | Mean :11.14 | Mean :0.06917 | Mean :0.5547 | Mean :6.285 | Mean : 68.57 | Mean : 3.795 | Mean : 9.549 | Mean :408.2 | Mean :18.46 | Mean :356.67 | Mean :12.65 | Mean :22.53 | |

| 3rd Qu.: 3.67708 | 3rd Qu.: 12.50 | 3rd Qu.:18.10 | 3rd Qu.:0.00000 | 3rd Qu.:0.6240 | 3rd Qu.:6.623 | 3rd Qu.: 94.08 | 3rd Qu.: 5.188 | 3rd Qu.:24.000 | 3rd Qu.:666.0 | 3rd Qu.:20.20 | 3rd Qu.:396.23 | 3rd Qu.:16.95 | 3rd Qu.:25.00 | |

| Max. :88.97620 | Max. :100.00 | Max. :27.74 | Max. :1.00000 | Max. :0.8710 | Max. :8.780 | Max. :100.00 | Max. :12.127 | Max. :24.000 | Max. :711.0 | Max. :22.00 | Max. :396.90 | Max. :37.97 | Max. :50.00 |

6.3.3 Create table 1

A “Table 1” is a standard descriptive table for reporting baseline characteristics (clinical trials, observational studies, epidemiology, etc.). Here we create a Table 1 across all variables without grouping, mainly to practice the tool and check distributions.

## Warning: package 'table1' was built under R version 4.4.3| Overall (N=506) |

|

|---|---|

| crim | |

| Mean (SD) | 3.61 (8.60) |

| Median [Min, Max] | 0.257 [0.00632, 89.0] |

| zn | |

| Mean (SD) | 11.4 (23.3) |

| Median [Min, Max] | 0 [0, 100] |

| indus | |

| Mean (SD) | 11.1 (6.86) |

| Median [Min, Max] | 9.69 [0.460, 27.7] |

| chas | |

| Mean (SD) | 0.0692 (0.254) |

| Median [Min, Max] | 0 [0, 1.00] |

| nox | |

| Mean (SD) | 0.555 (0.116) |

| Median [Min, Max] | 0.538 [0.385, 0.871] |

| rm | |

| Mean (SD) | 6.28 (0.703) |

| Median [Min, Max] | 6.21 [3.56, 8.78] |

| age | |

| Mean (SD) | 68.6 (28.1) |

| Median [Min, Max] | 77.5 [2.90, 100] |

| dis | |

| Mean (SD) | 3.80 (2.11) |

| Median [Min, Max] | 3.21 [1.13, 12.1] |

| rad | |

| Mean (SD) | 9.55 (8.71) |

| Median [Min, Max] | 5.00 [1.00, 24.0] |

| tax | |

| Mean (SD) | 408 (169) |

| Median [Min, Max] | 330 [187, 711] |

| ptratio | |

| Mean (SD) | 18.5 (2.16) |

| Median [Min, Max] | 19.1 [12.6, 22.0] |

| black | |

| Mean (SD) | 357 (91.3) |

| Median [Min, Max] | 391 [0.320, 397] |

| lstat | |

| Mean (SD) | 12.7 (7.14) |

| Median [Min, Max] | 11.4 [1.73, 38.0] |

| medv | |

| Mean (SD) | 22.5 (9.20) |

| Median [Min, Max] | 21.2 [5.00, 50.0] |

Practical note: in real reporting, Table 1 is usually stratified by a group (e.g., treatment arm), but the unstratified version is still useful as a data audit.

6.3.4 Missingness checking

Before modeling, verify whether any variables have missing values and whether patterns exist. The Boston dataset is typically complete, but this step is included because real datasets almost never are.

md.pattern() (from mice) shows missingness patterns and counts by variable.

## Warning: package 'mice' was built under R version 4.4.3## /\ /\

## { `---' }

## { O O }

## ==> V <== No need for mice. This data set is completely observed.

## \ \|/ /

## `-----'

| crim | zn | indus | chas | nox | rm | age | dis | rad | tax | ptratio | black | lstat | medv | ||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 506 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 0 |

| 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

If missingness exists, you should decide whether it is: - MCAR (completely at random), - MAR (at random conditional on observed data), - MNAR (not at random).

That decision influences the imputation strategy and the validity of downstream inference.

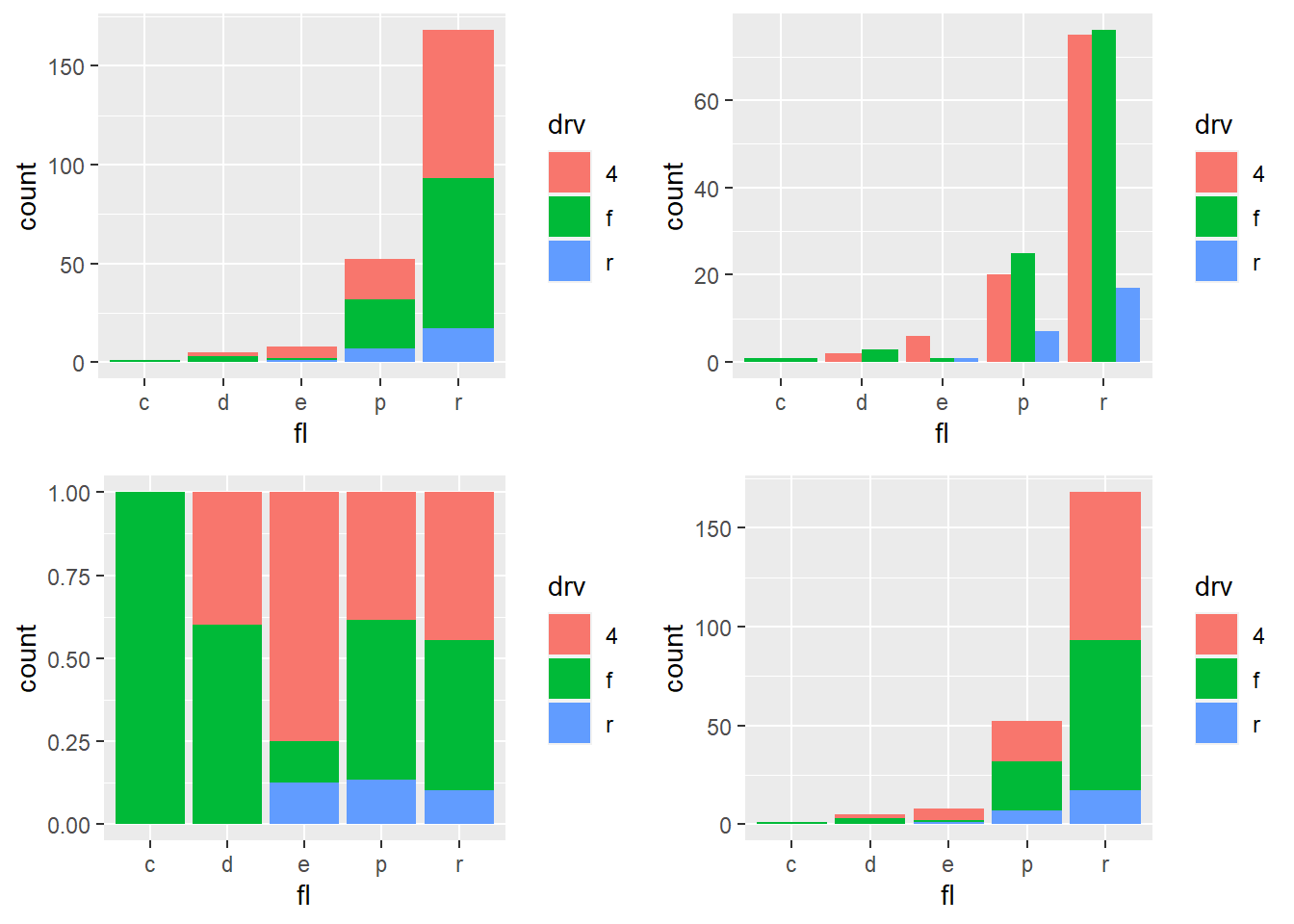

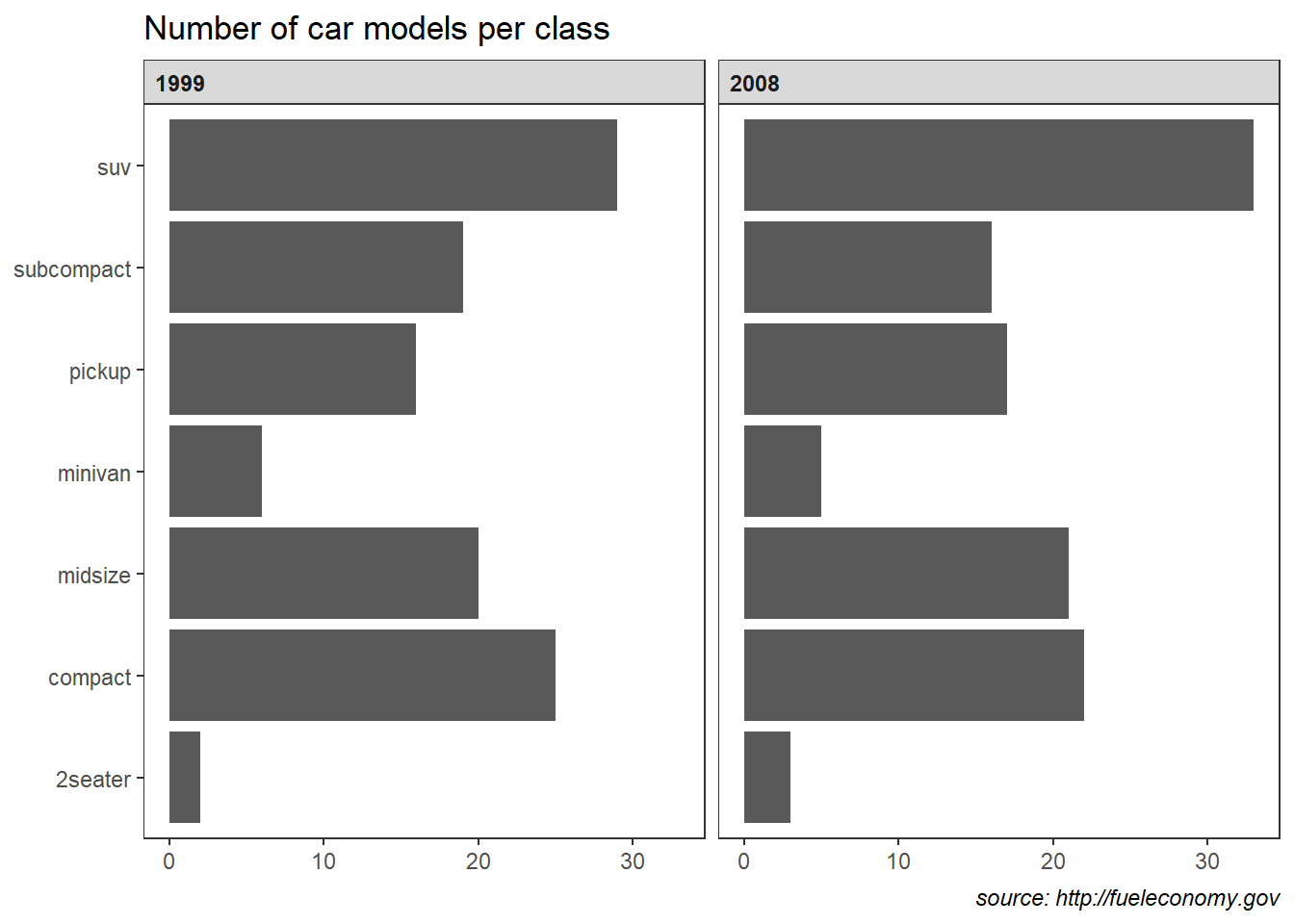

6.3.5 Exploratory data analysis

Exploratory data analysis (EDA) is where you learn the “shape” of the data: correlations, nonlinear relationships, skewness, and potential outliers.

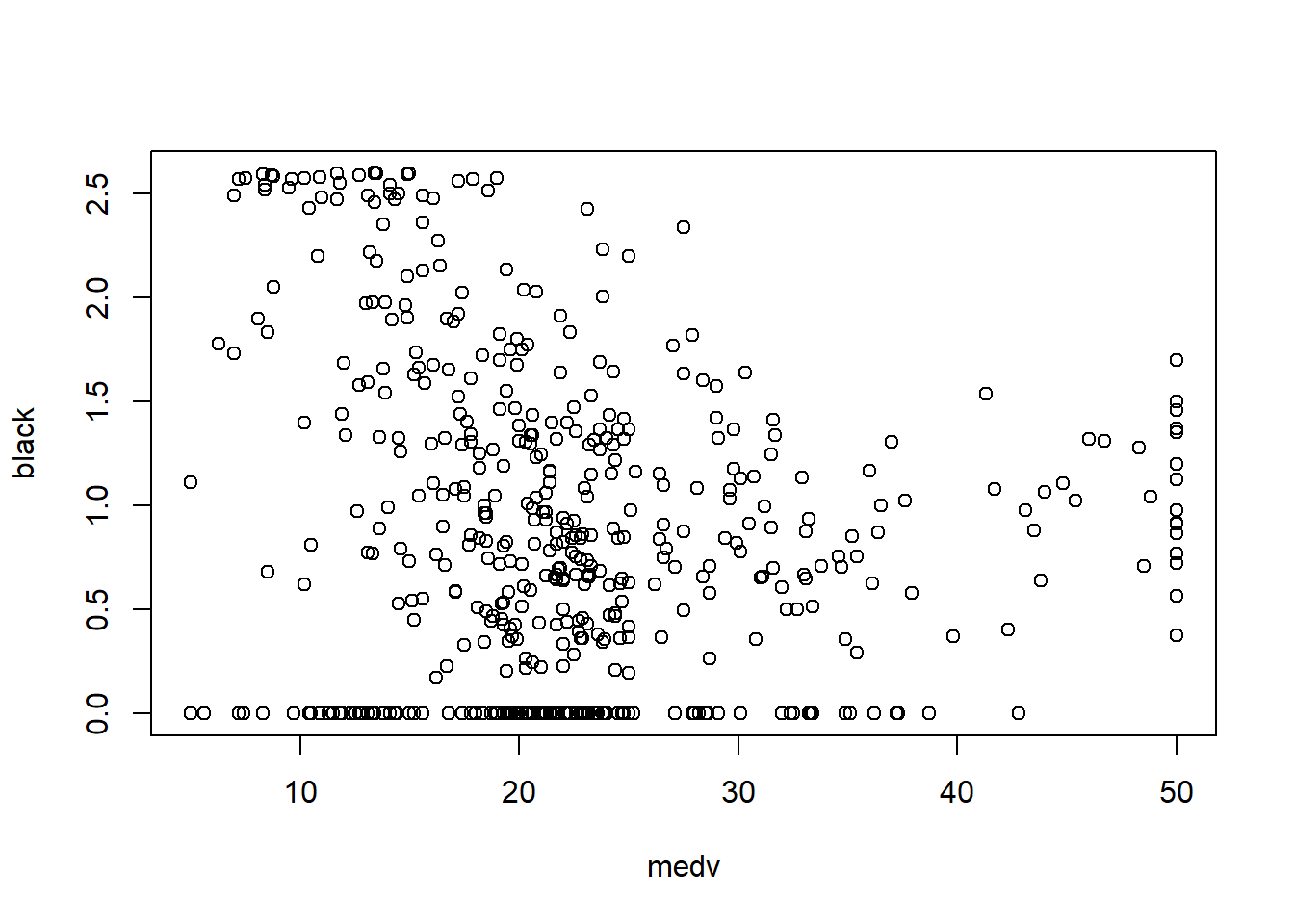

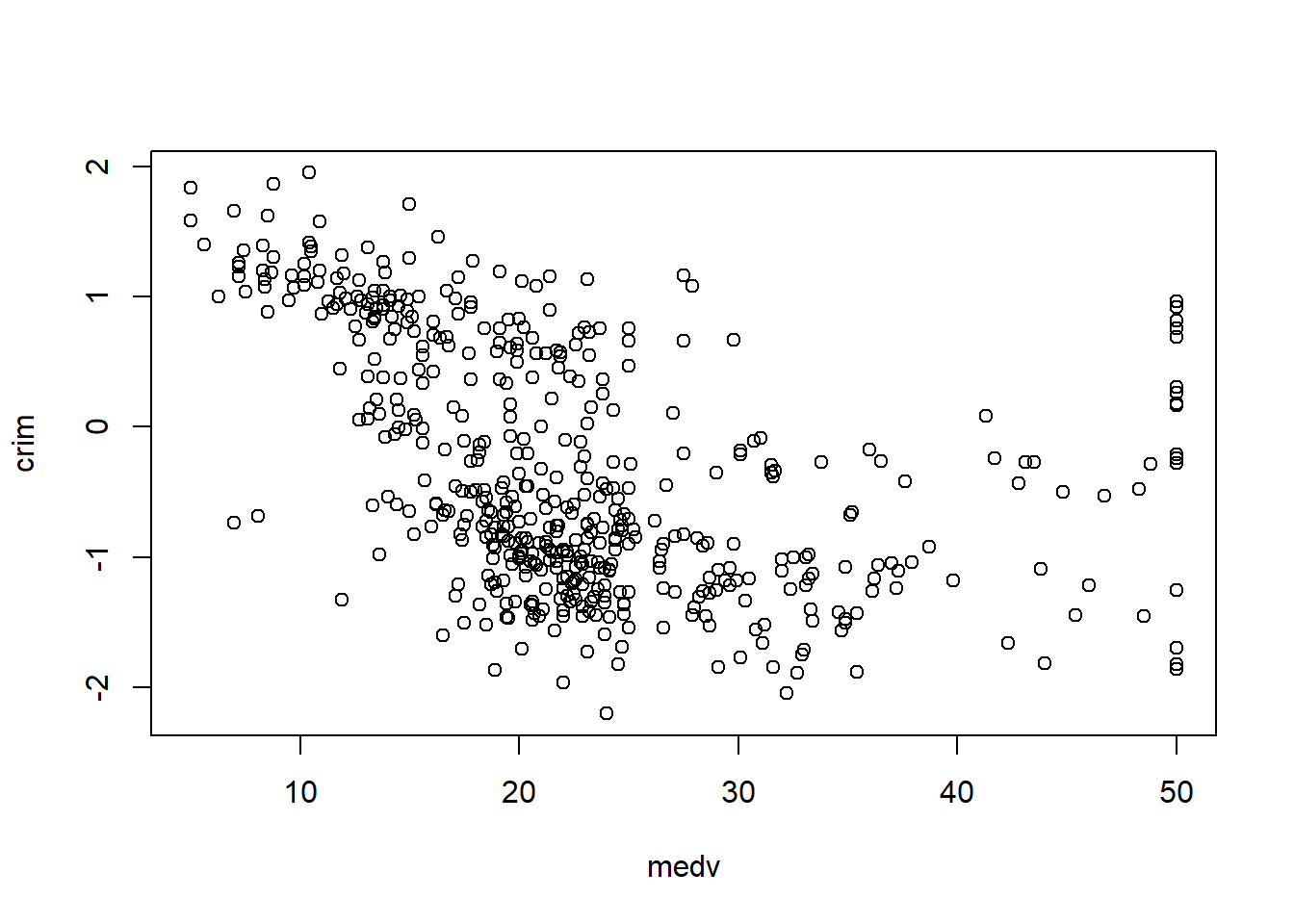

6.3.6 Transformations

Many predictors in real-world socioeconomic and biomedical data are skewed. Transformations can: - improve linearity, - stabilize variance, - reduce influence of extreme values, - make residuals closer to normal.

Here you apply a set of transformations guided by a common heuristic:

- log transforms for strictly positive skewed variables,

- square root for moderately skewed,

- reflections when needed to “flip” direction (as shown with age and black).

The goal is not “making everything normal,” but making linear modeling assumptions more reasonable.

library(tidyverse)

data_trans = data_ori %>% mutate(age= sqrt(max(age)+1-age),

black= log10(max(black)+1-black),

crim= log10(crim),

dis= sqrt(dis) )

plot_histogram(data_trans)

! How to transform data for normality.

A practical note: transformations should be applied thoughtfully and documented clearly, especially if you need interpretability. For example, a log-transformed predictor means coefficients represent changes per multiplicative change in the original scale.

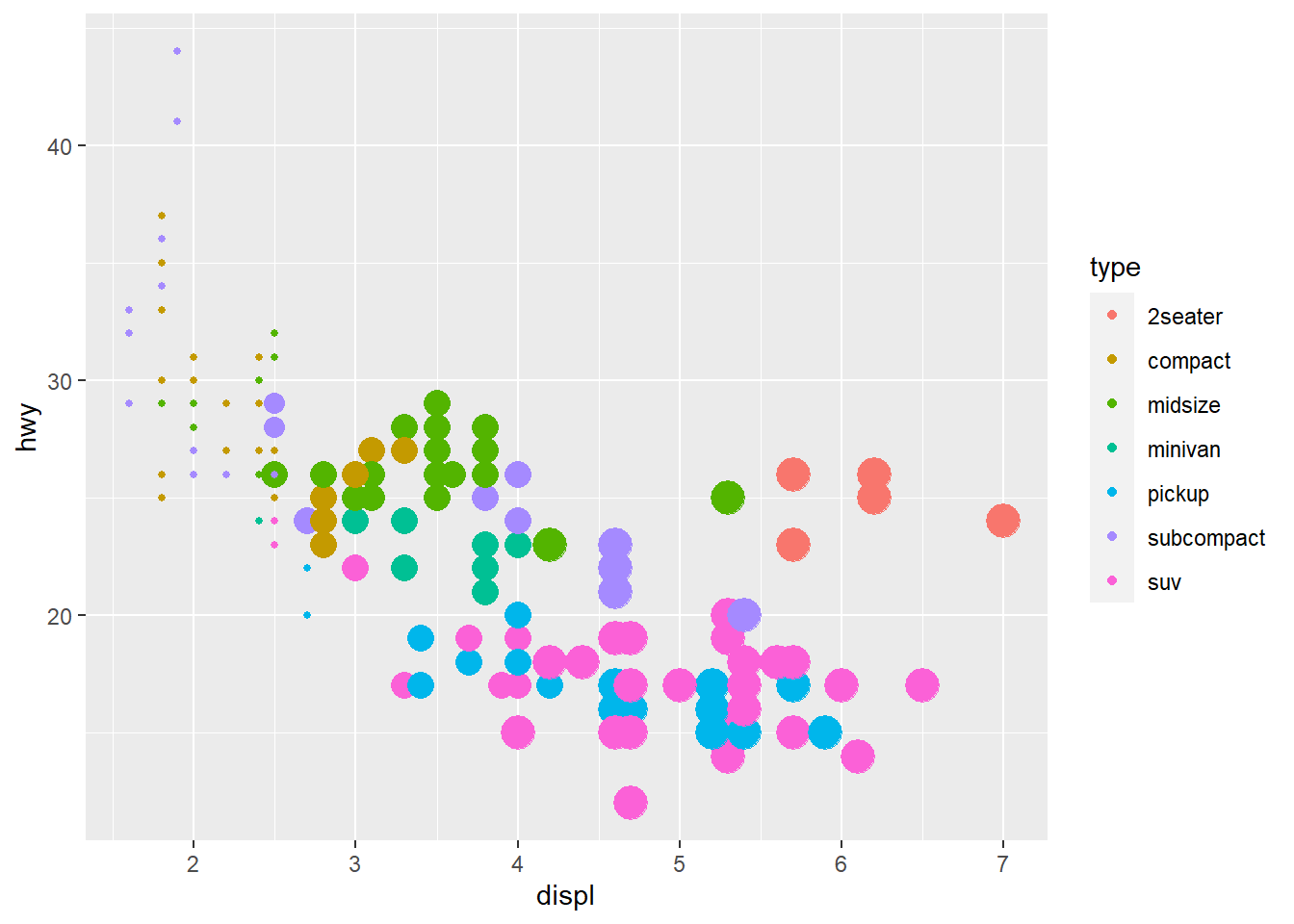

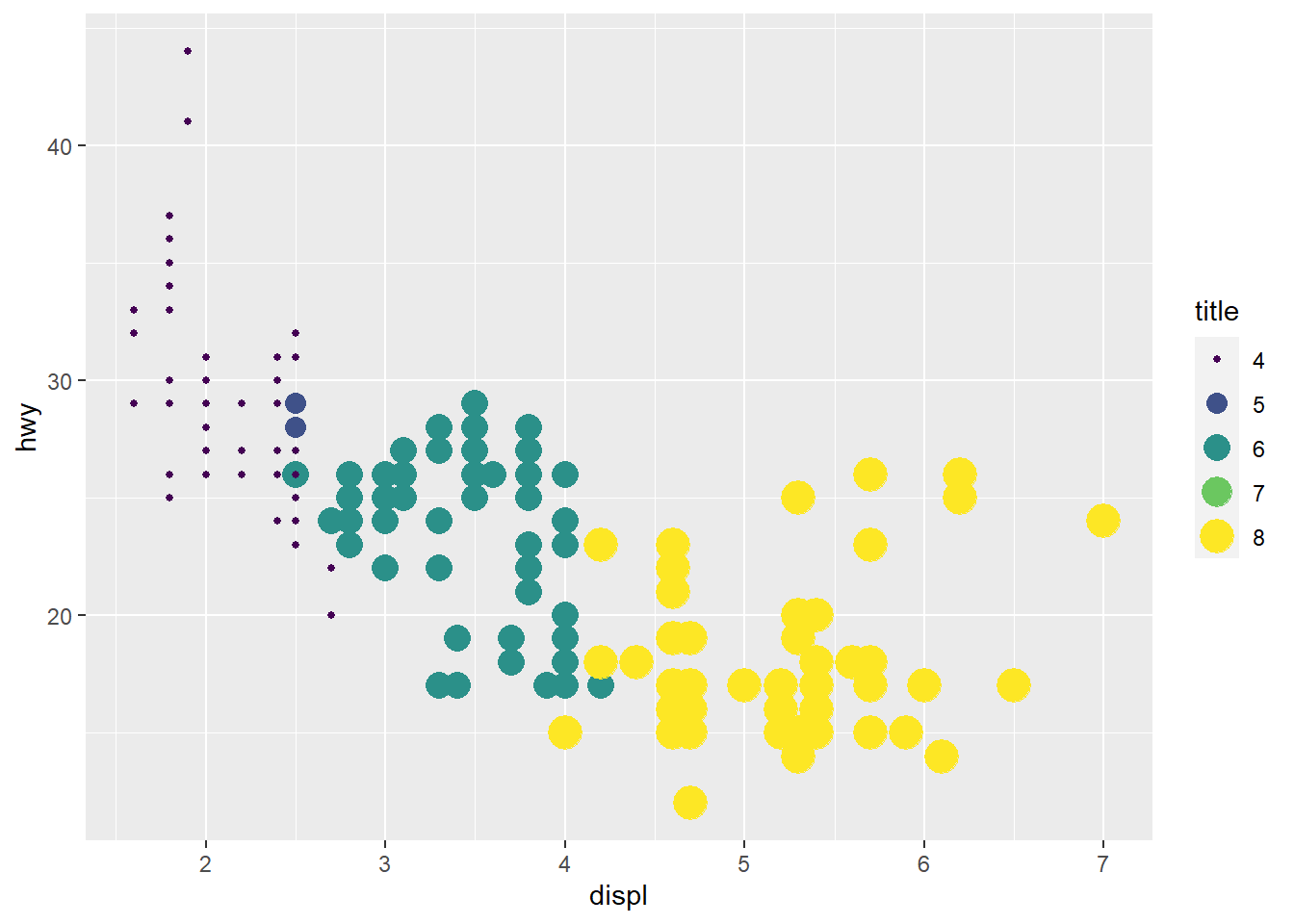

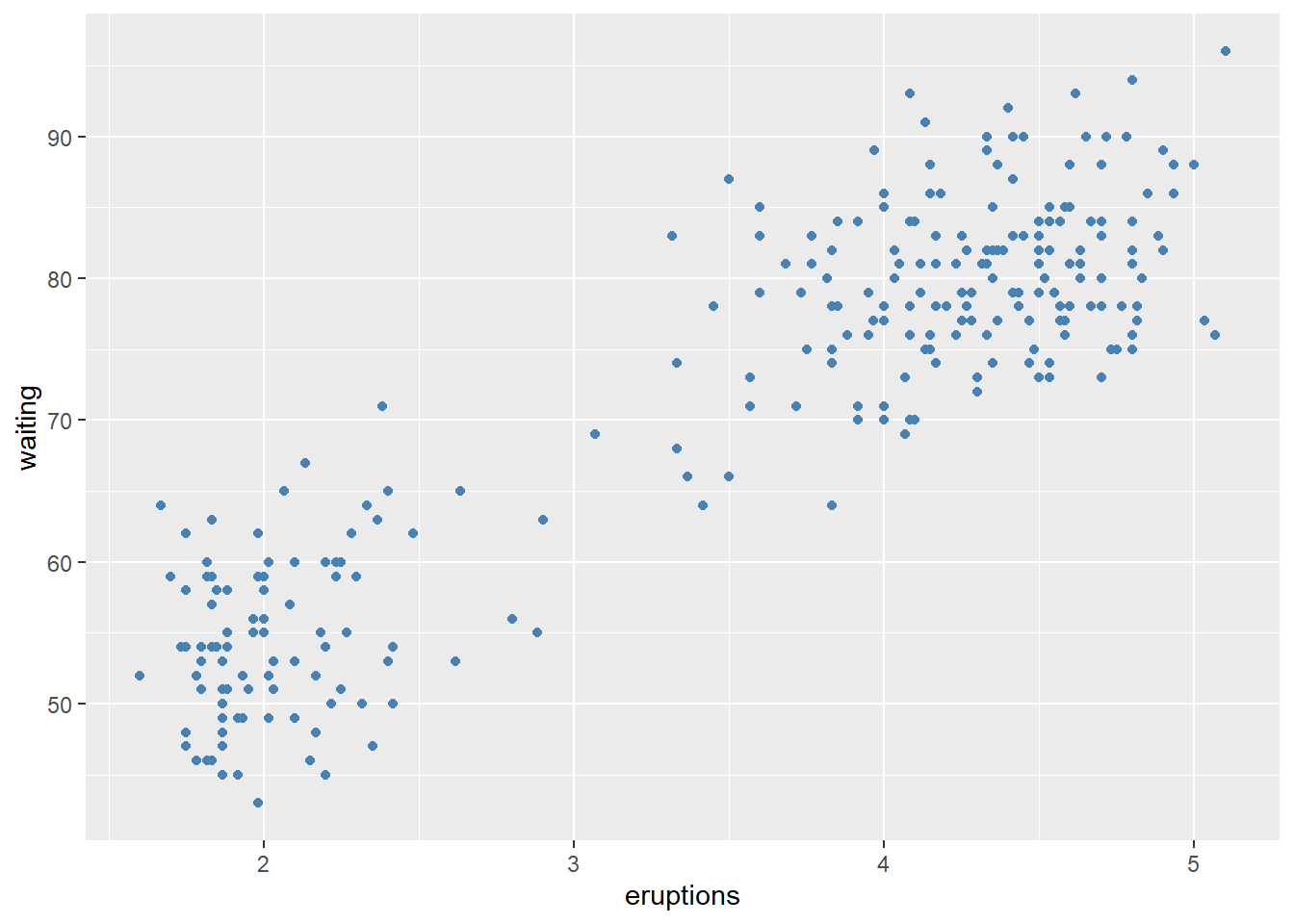

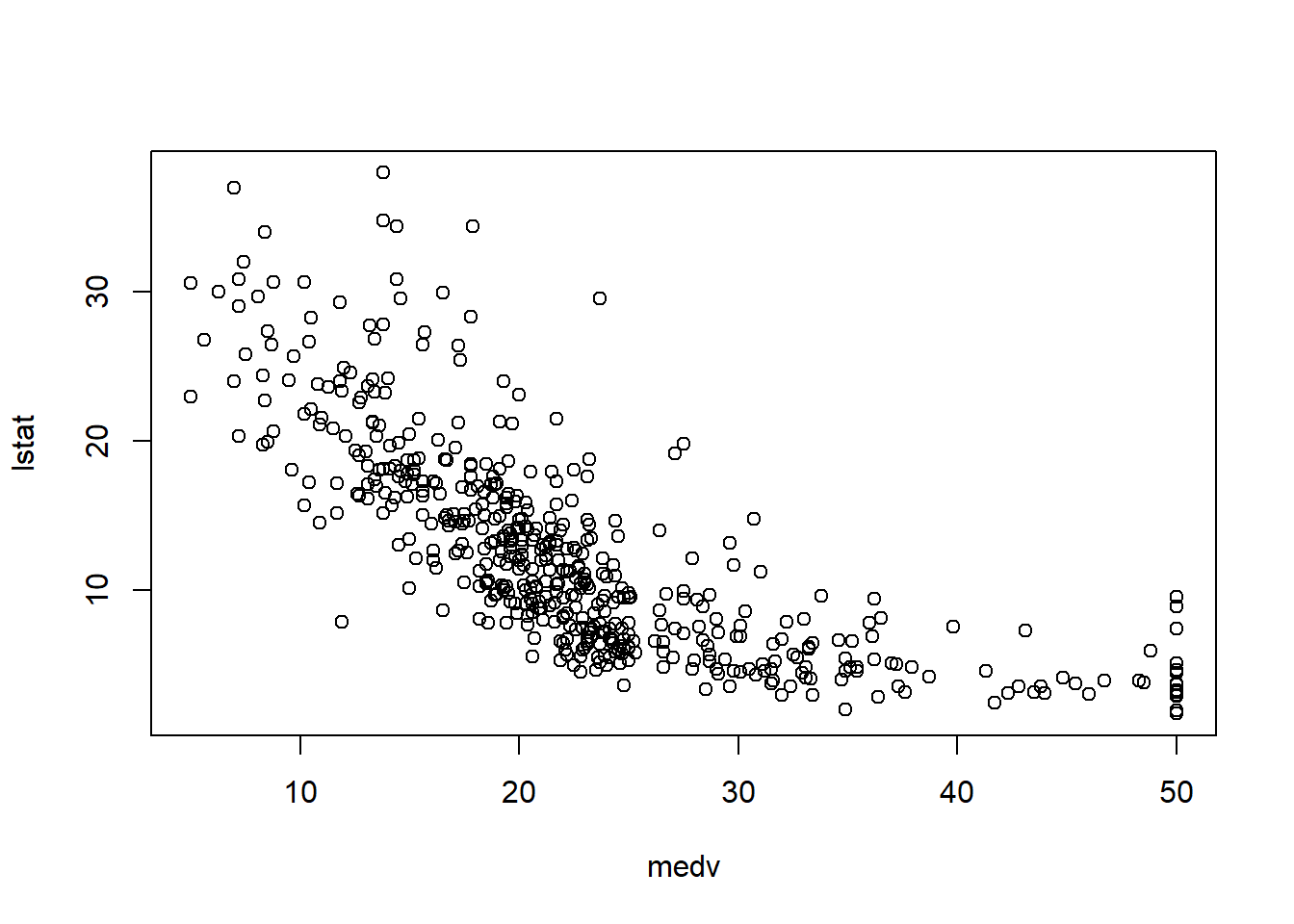

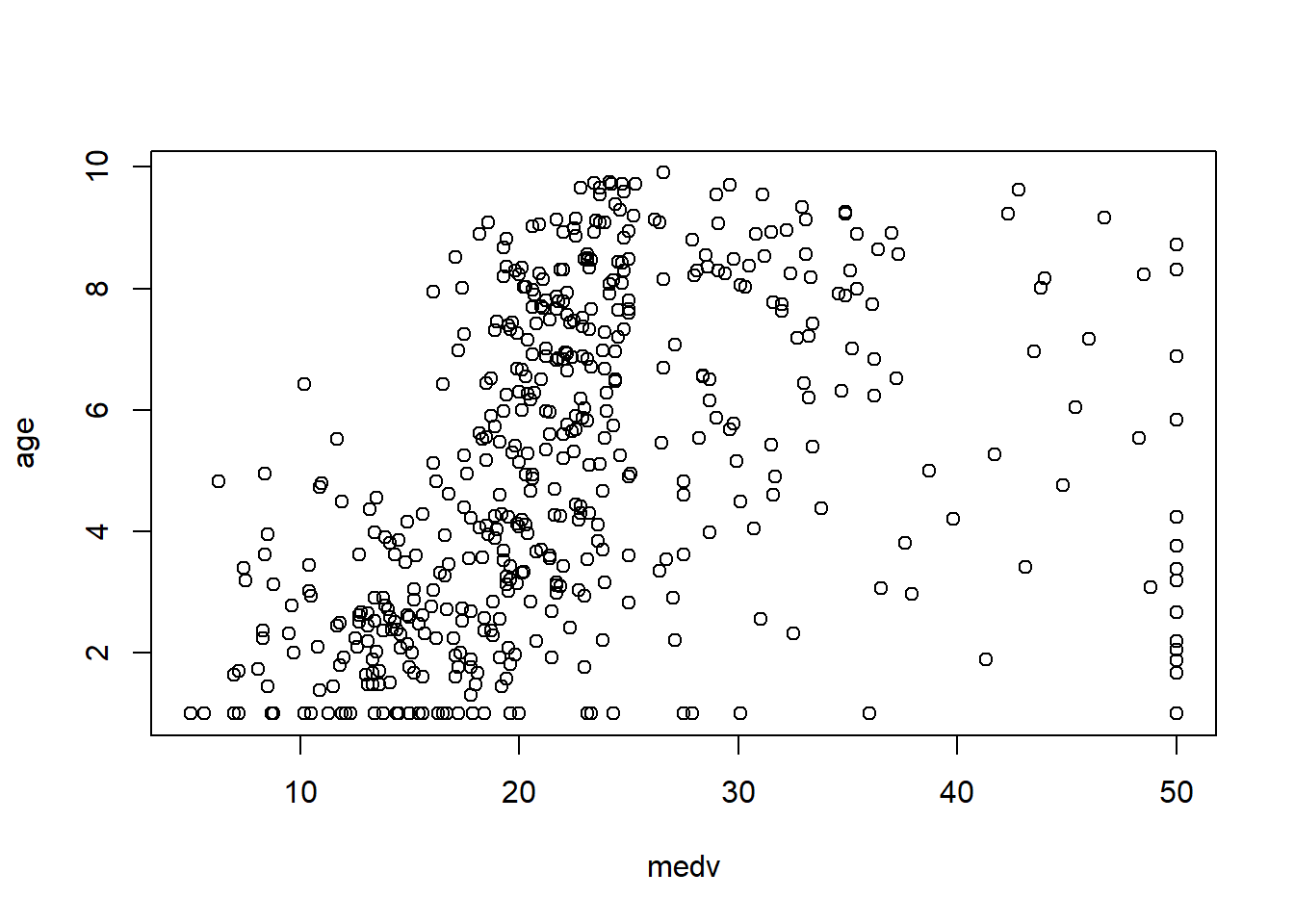

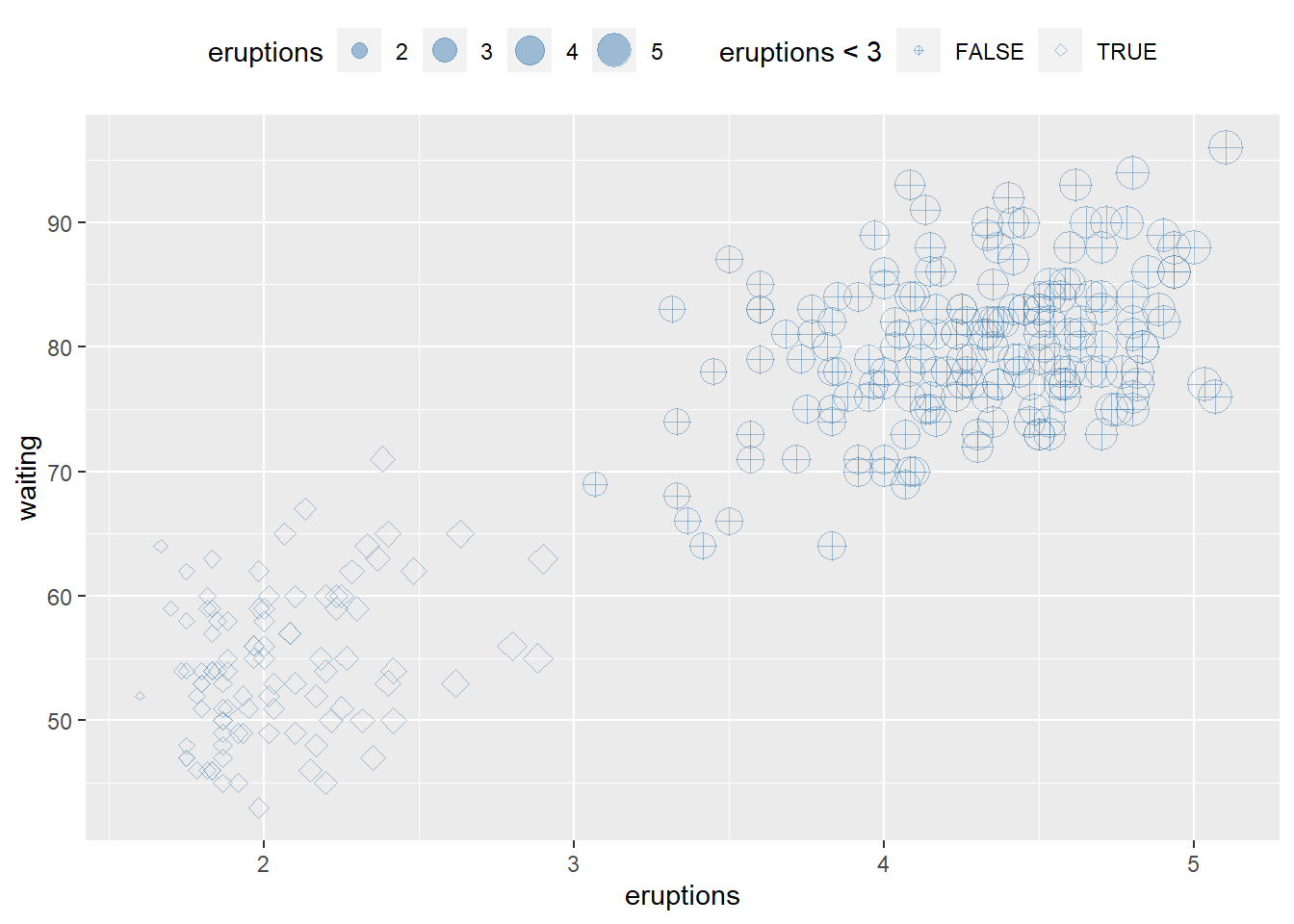

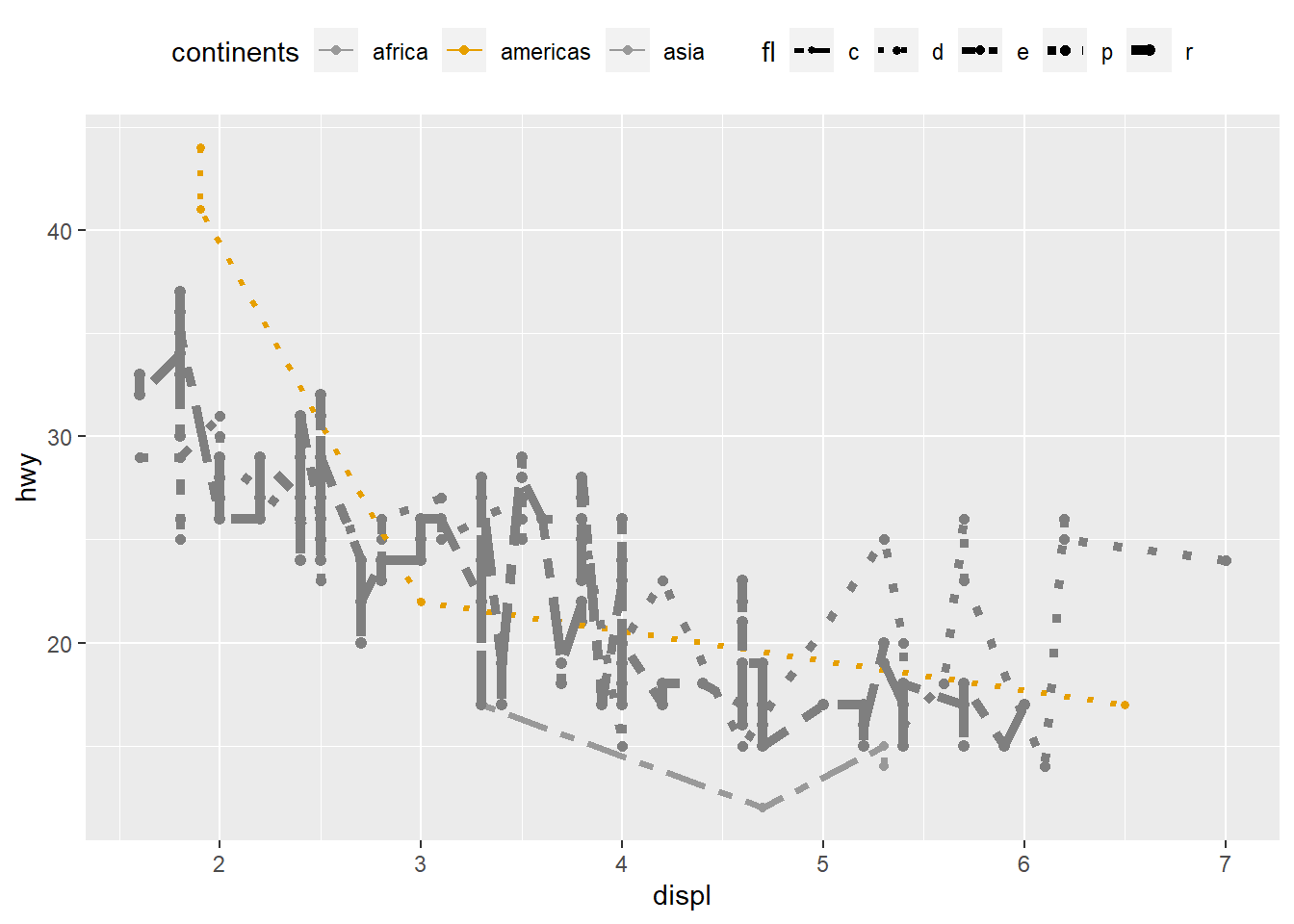

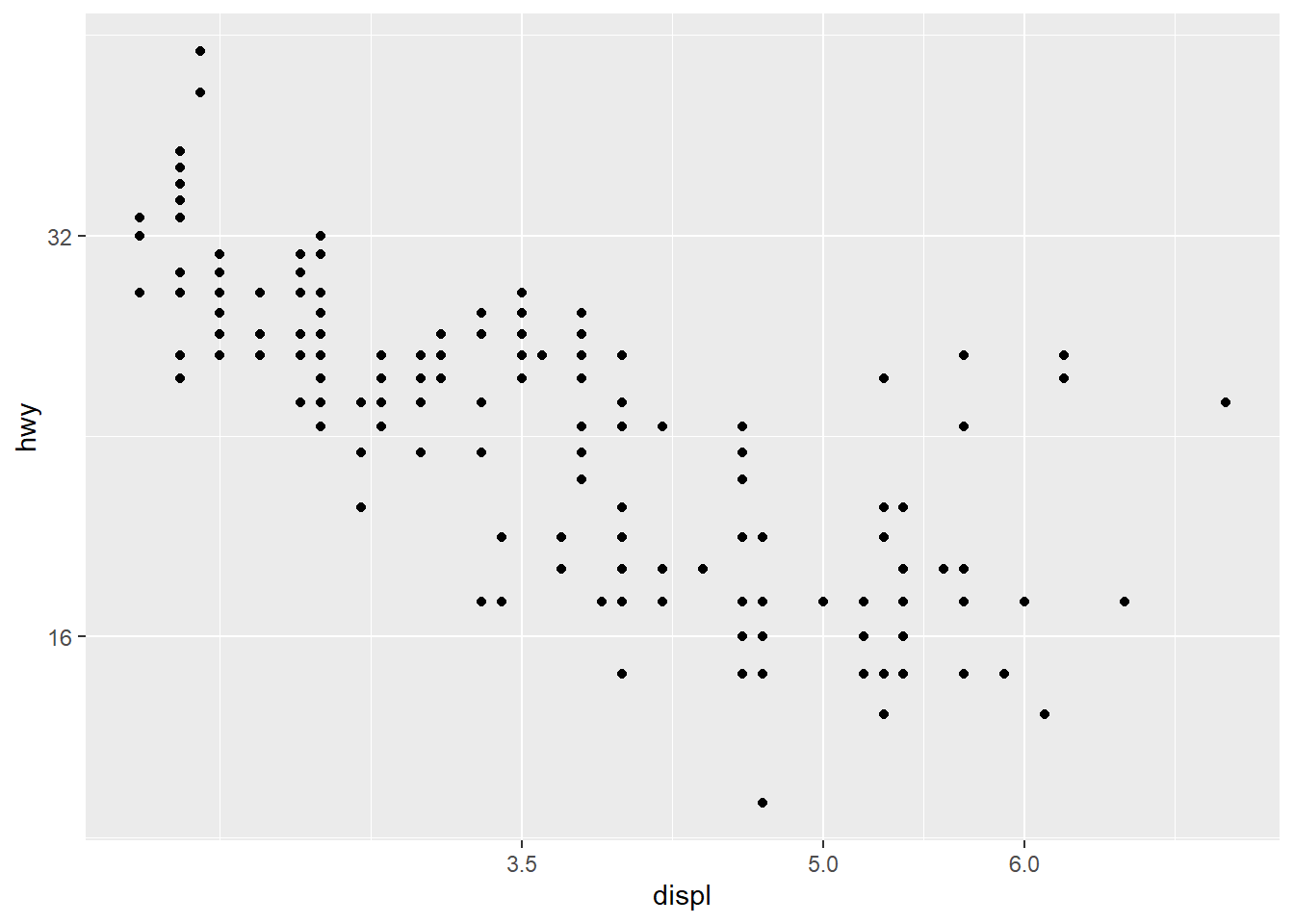

6.3.7 Check linearity between \(y\) and \(x\)

Before fitting a multivariable model, it helps to check whether key predictors have roughly linear relationships with the outcome. Scatterplots can immediately show: - curvature (suggesting polynomial terms), - clusters (suggesting interactions or stratification), - heteroscedasticity (spread changes with \(X\)).

In practice, the Boston housing dataset is known for strong relationships between medv and both lstat and rm, and these often show nonlinearity—motivating quadratic terms and interactions later.

6.3.8 Data imputation and normalization

This workflow demonstrates KNN imputation using caret::preProcess(method = "knnImpute"). Even if the dataset has no missing values, this section is included because missingness is common in applied work.

6.3.8.1 For original “data”

We store medv separately before preprocessing, then restore it afterward. This preserves the target variable while applying preprocessing to predictors.

library(caret)

# Create the knn imputation model on the training data

y=data_ori$medv

preProcess_missingdata_model <- preProcess(data_ori , method='knnImpute')

preProcess_missingdata_model## Created from 506 samples and 14 variables

##

## Pre-processing:

## - centered (14)

## - ignored (0)

## - 5 nearest neighbor imputation (14)

## - scaled (14)Then we apply the model and verify missingness is resolved. anyNA() is a quick binary check; in larger projects you may also compute missing rates by column.

# Use the imputation model to predict the values of missing data points

library(RANN) # required for knnInpute## Warning: package 'RANN' was built under R version 4.4.3## [1] FALSE6.3.8.2 For transformed “data2”

We repeat the same workflow for the transformed dataset. This creates a fair comparison between “original scale” and “transformed scale” modeling pipelines.

library(caret)

y2=data_trans$medv

# Create the knn imputation model on the training data

preProcess_missingdata_model2 <- preProcess(data_trans , method='knnImpute')

preProcess_missingdata_model2## Created from 506 samples and 14 variables

##

## Pre-processing:

## - centered (14)

## - ignored (0)

## - 5 nearest neighbor imputation (14)

## - scaled (14)# Use the imputation model to predict the values of missing data points

library(RANN) # required for knnInpute

data_trans <- predict(preProcess_missingdata_model2, newdata = data_trans )

anyNA(data_trans )## [1] FALSE6.3.9 Generate dummy variables

Categorical predictors must be encoded properly before modeling. In many workflows, converting variables to factor is enough because lm() automatically handles factors using dummy coding (with a reference level).

- also can do using

as.factorfunction for predictorsx

In real projects, pay attention to: - reference group selection, - whether categories are sparse, - and whether encoding must be consistent across train/test.

6.3.10 Splitting data into training and test data

A training/test split provides external-style evaluation: the model is fit on training data and assessed on held-out test data.

This helps detect overfitting, especially when you: - try many model variants, - add polynomial terms, - include interactions, - or apply variable selection procedures.

# Create the training and test datasets

set.seed(123)

# for original data

# Step 1: Get row numbers for the training data

trainRowNumbers <- createDataPartition(data_ori$medv, p=0.8, list=FALSE)

# Step 2: Create the training dataset

data <- data_ori[trainRowNumbers,]

# Step 3: Create the test dataset

testdata <- data_ori[-trainRowNumbers,]

# for transformed data

# Step 1: Get row numbers for the training data

trainRowNumbers2 <- createDataPartition(data_trans$medv, p=0.8, list=FALSE)

# Step 2: Create the training dataset

data2 <- data_trans[trainRowNumbers2,]

# Step 3: Create the test dataset

testdata2 <- data_trans[-trainRowNumbers2,]Practical note: createDataPartition() creates balanced partitions with respect to the outcome distribution, which can be helpful when the outcome is skewed.

6.3.11 Step regression

Stepwise regression is a common teaching tool and sometimes used in quick exploratory modeling. It iteratively adds/removes variables to optimize a criterion (typically AIC by default).

However, in serious applied work, stepwise selection can be unstable and can inflate type I error if you treat the final p-values as if selection never happened. Treat it as a screening tool, and validate with cross-validation.

## Start: AIC=1281.15

## medv ~ crim + zn + indus + chas + nox + rm + age + dis + rad +

## tax + ptratio + black + lstat

##

## Df Sum of Sq RSS AIC

## - black 1 0.17 8847.0 1279.2

## - age 1 7.07 8853.9 1279.5

## - crim 1 14.36 8861.2 1279.8

## - indus 1 24.08 8870.9 1280.3

## <none> 8846.8 1281.2

## - rad 1 103.22 8950.0 1283.9

## - tax 1 156.33 9003.1 1286.3

## - zn 1 198.34 9045.2 1288.2

## - chas 1 251.31 9098.1 1290.5

## - nox 1 692.00 9538.8 1309.8

## - ptratio 1 840.04 9686.9 1316.1

## - rm 1 965.90 9812.7 1321.3

## - dis 1 1349.41 10196.2 1336.9

## - lstat 1 2766.14 11613.0 1389.9

##

## Step: AIC=1279.16

## medv ~ crim + zn + indus + chas + nox + rm + age + dis + rad +

## tax + ptratio + lstat

##

## Df Sum of Sq RSS AIC

## - age 1 7.15 8854.1 1277.5

## - crim 1 14.32 8861.3 1277.8

## - indus 1 24.52 8871.5 1278.3

## <none> 8847.0 1279.2

## + black 1 0.17 8846.8 1281.2

## - rad 1 103.72 8950.7 1281.9

## - tax 1 157.40 9004.4 1284.3

## - zn 1 198.20 9045.2 1286.2

## - chas 1 251.25 9098.2 1288.6

## - nox 1 695.37 9542.4 1308.0

## - ptratio 1 850.76 9697.7 1314.5

## - rm 1 966.99 9814.0 1319.4

## - dis 1 1375.04 10222.0 1336.0

## - lstat 1 2770.28 11617.3 1388.0

##

## Step: AIC=1277.49

## medv ~ crim + zn + indus + chas + nox + rm + dis + rad + tax +

## ptratio + lstat

##

## Df Sum of Sq RSS AIC

## - crim 1 18.36 8872.5 1276.3

## - indus 1 25.56 8879.7 1276.7

## <none> 8854.1 1277.5

## + age 1 7.15 8847.0 1279.2

## + black 1 0.26 8853.9 1279.5

## - rad 1 97.20 8951.3 1279.9

## - tax 1 152.93 9007.1 1282.5

## - zn 1 196.76 9050.9 1284.4

## - chas 1 255.17 9109.3 1287.0

## - nox 1 694.68 9548.8 1306.2

## - ptratio 1 843.66 9697.8 1312.5

## - rm 1 1023.40 9877.5 1320.0

## - dis 1 1633.60 10487.7 1344.4

## - lstat 1 2978.57 11832.7 1393.5

##

## Step: AIC=1276.33

## medv ~ zn + indus + chas + nox + rm + dis + rad + tax + ptratio +

## lstat

##

## Df Sum of Sq RSS AIC

## - indus 1 21.92 8894.4 1275.3

## <none> 8872.5 1276.3

## + crim 1 18.36 8854.1 1277.5

## + age 1 11.19 8861.3 1277.8

## + black 1 0.13 8872.4 1278.3

## - tax 1 158.39 9030.9 1281.5

## - zn 1 180.19 9052.7 1282.5

## - rad 1 212.19 9084.7 1284.0

## - chas 1 249.50 9122.0 1285.6

## - nox 1 689.33 9561.8 1304.8

## - ptratio 1 873.78 9746.3 1312.6

## - rm 1 1025.43 9897.9 1318.8

## - dis 1 1701.37 10573.9 1345.7

## - lstat 1 2996.77 11869.3 1392.8

##

## Step: AIC=1275.34

## medv ~ zn + chas + nox + rm + dis + rad + tax + ptratio + lstat

##

## Df Sum of Sq RSS AIC

## <none> 8894.4 1275.3

## + indus 1 21.92 8872.5 1276.3

## + crim 1 14.72 8879.7 1276.7

## + age 1 11.89 8882.5 1276.8

## + black 1 0.00 8894.4 1277.3

## - zn 1 206.52 9100.9 1282.7

## - chas 1 237.50 9131.9 1284.1

## - tax 1 281.42 9175.8 1286.0

## - rad 1 293.27 9187.7 1286.5

## - nox 1 800.54 9695.0 1308.4

## - ptratio 1 929.05 9823.5 1313.8

## - rm 1 1083.34 9977.8 1320.1

## - dis 1 1706.94 10601.4 1344.8

## - lstat 1 3024.07 11918.5 1392.5##

## Call:

## lm(formula = medv ~ zn + chas + nox + rm + dis + rad + tax +

## ptratio + lstat, data = data2)

##

## Coefficients:

## (Intercept) zn chas nox rm dis

## 22.509 1.016 0.759 -2.764 2.176 -3.970

## rad tax ptratio lstat

## 2.119 -2.317 -1.991 -4.2246.3.12 Create a model after selecting variables

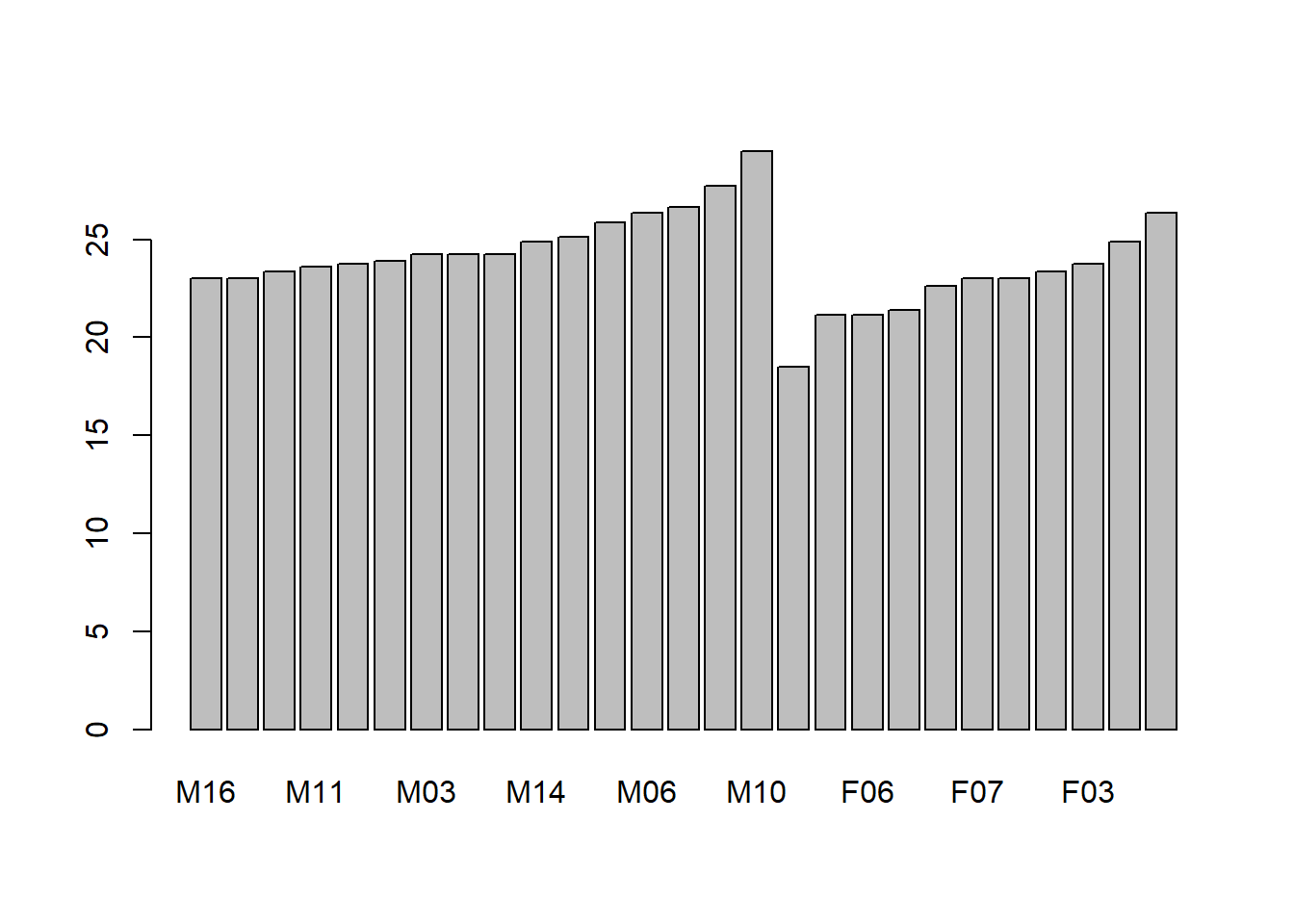

After variable selection (or based on domain knowledge), we fit a parsimonious model and inspect its statistical summary.

The summary() output gives:

- coefficient estimates,

- standard errors and t-tests,

- residual standard error,

- \(R^2\) and adjusted \(R^2\),

- overall F-statistic.

model_trasf <- lm(formula = medv ~ zn + chas + nox + rm + dis + rad + tax +

ptratio + lstat, data = data2)

summary(model_trasf)##

## Call:

## lm(formula = medv ~ zn + chas + nox + rm + dis + rad + tax +

## ptratio + lstat, data = data2)

##

## Residuals:

## Min 1Q Median 3Q Max

## -13.2923 -2.4690 -0.5086 1.6269 24.5813

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 22.5090 0.2352 95.718 < 2e-16 ***

## zn 1.0161 0.3347 3.036 0.002554 **

## chas 0.7590 0.2331 3.256 0.001227 **

## nox -2.7640 0.4624 -5.978 5.05e-09 ***

## rm 2.1760 0.3129 6.954 1.47e-11 ***

## dis -3.9697 0.4548 -8.729 < 2e-16 ***

## rad 2.1194 0.5858 3.618 0.000335 ***

## tax -2.3171 0.6538 -3.544 0.000441 ***

## ptratio -1.9909 0.3092 -6.440 3.47e-10 ***

## lstat -4.2244 0.3636 -11.618 < 2e-16 ***

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Residual standard error: 4.733 on 397 degrees of freedom

## Multiple R-squared: 0.7187, Adjusted R-squared: 0.7123

## F-statistic: 112.7 on 9 and 397 DF, p-value: < 2.2e-16At this stage, interpretability matters: each coefficient reflects the effect of that predictor holding others constant, assuming linearity and correct specification.

6.3.13 Multicollinearity checking

Multicollinearity inflates standard errors and makes coefficient estimates unstable. Variance inflation factor (VIF) is a standard diagnostic: - VIF ≈ 1: no collinearity - VIF moderately large: correlation among predictors - Very large VIF: serious instability

## zn chas nox rm dis rad tax ptratio

## 2.024269 1.044796 4.074241 1.721080 3.759267 6.008751 7.469414 1.743359

## lstat

## 2.372125In practice, multicollinearity is common in socioeconomic variables and in biomedical lab panels. If VIF is high, consider: - removing redundant variables, - combining variables (indexes), - penalized regression methods (ridge/lasso).

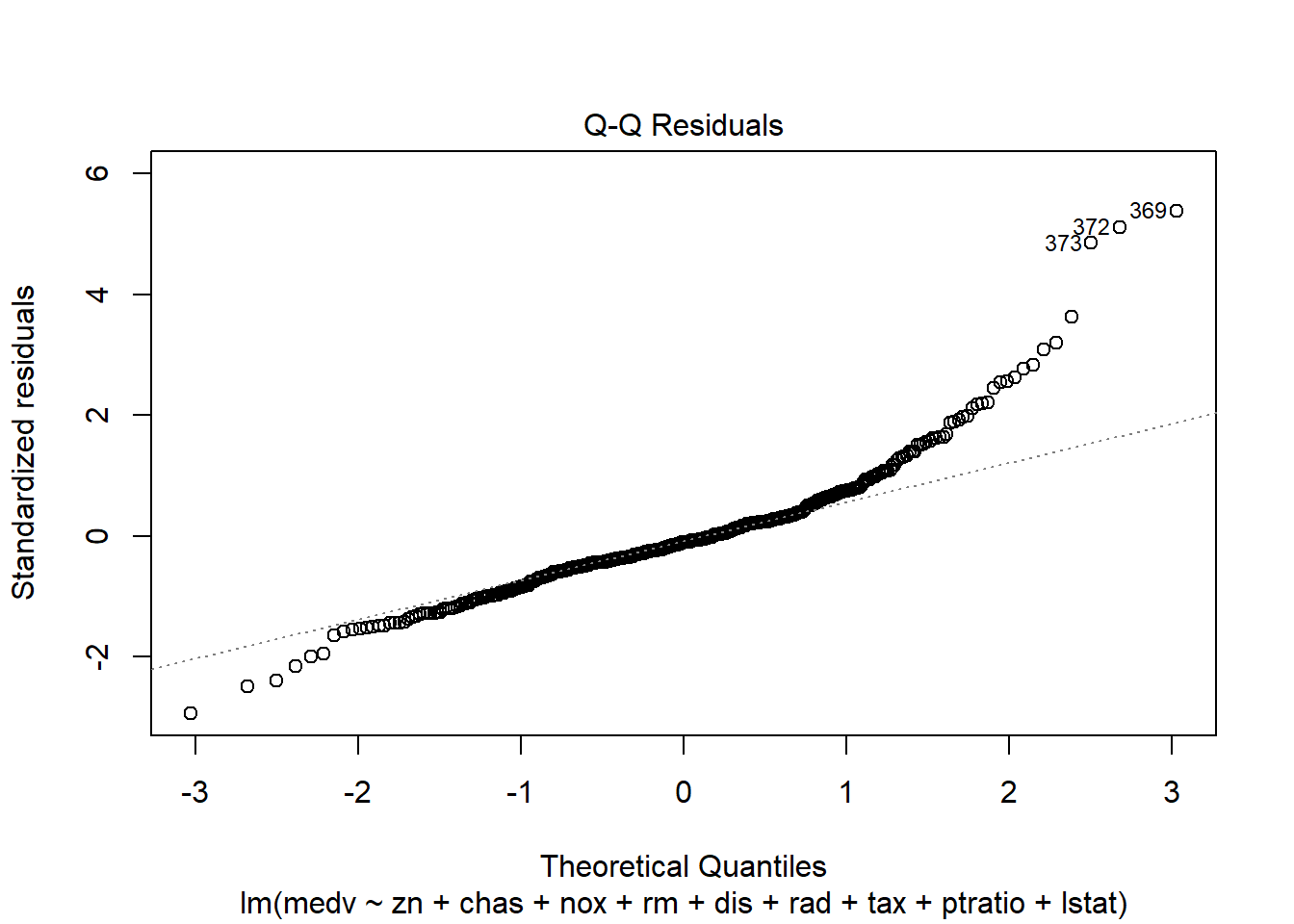

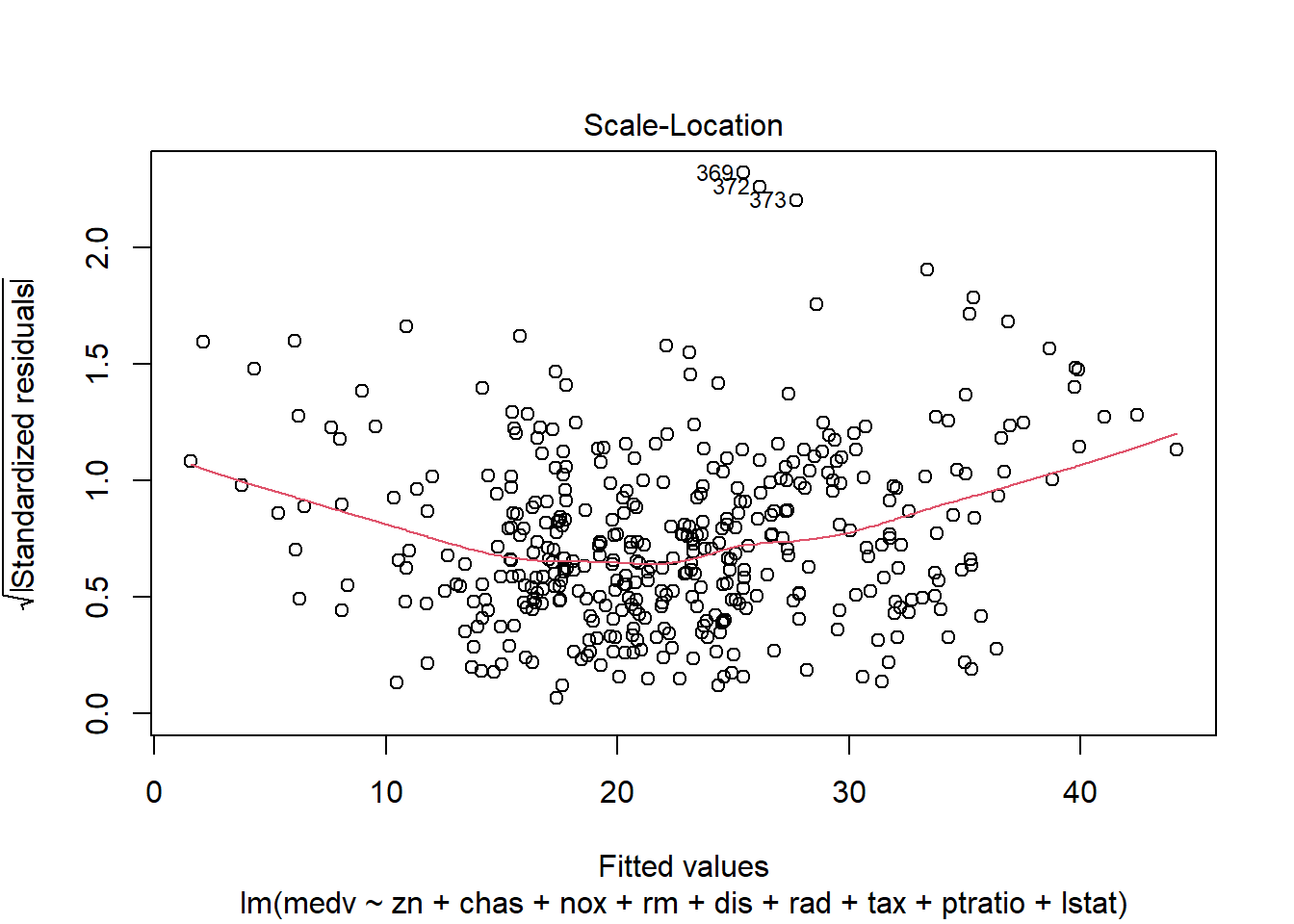

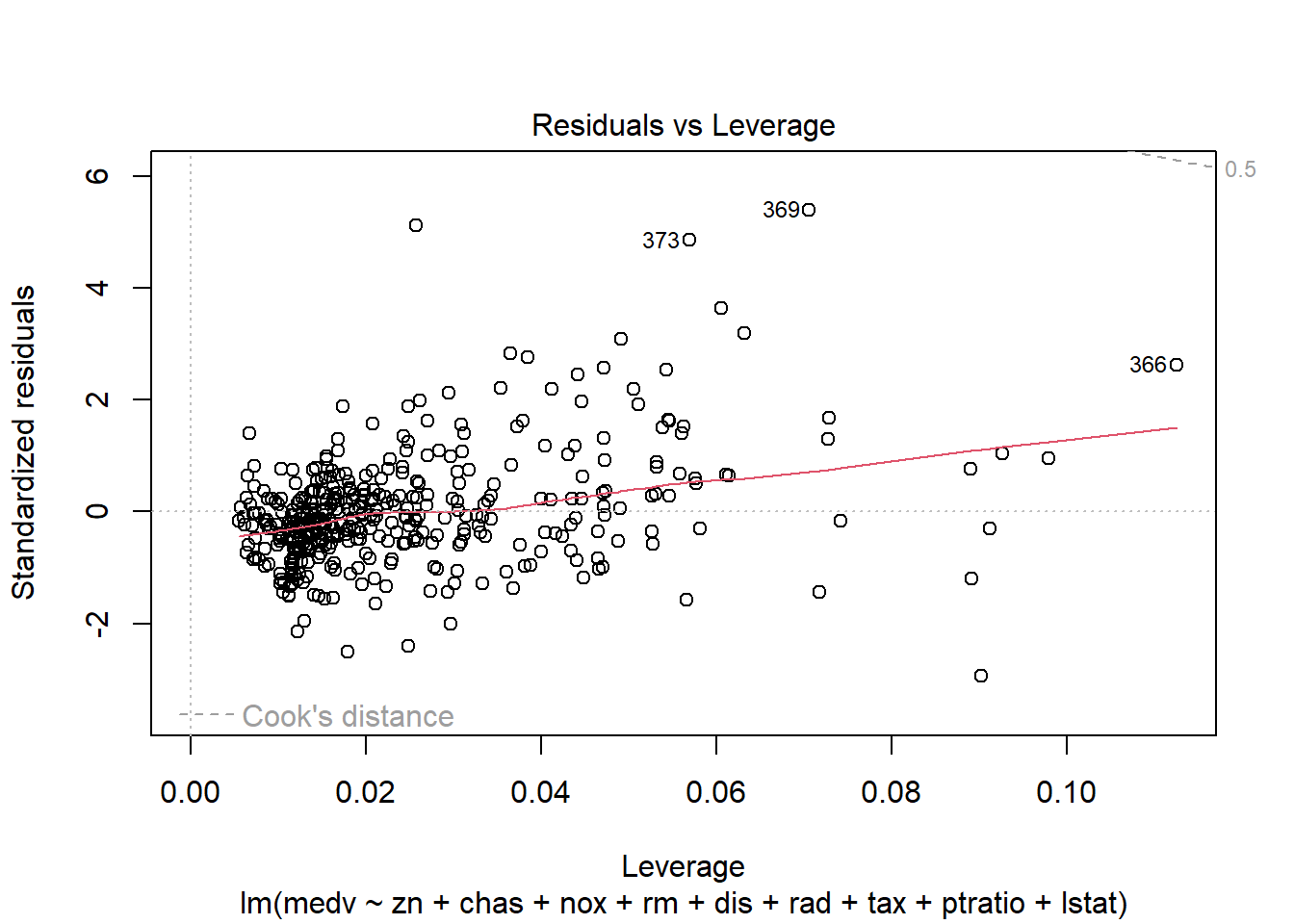

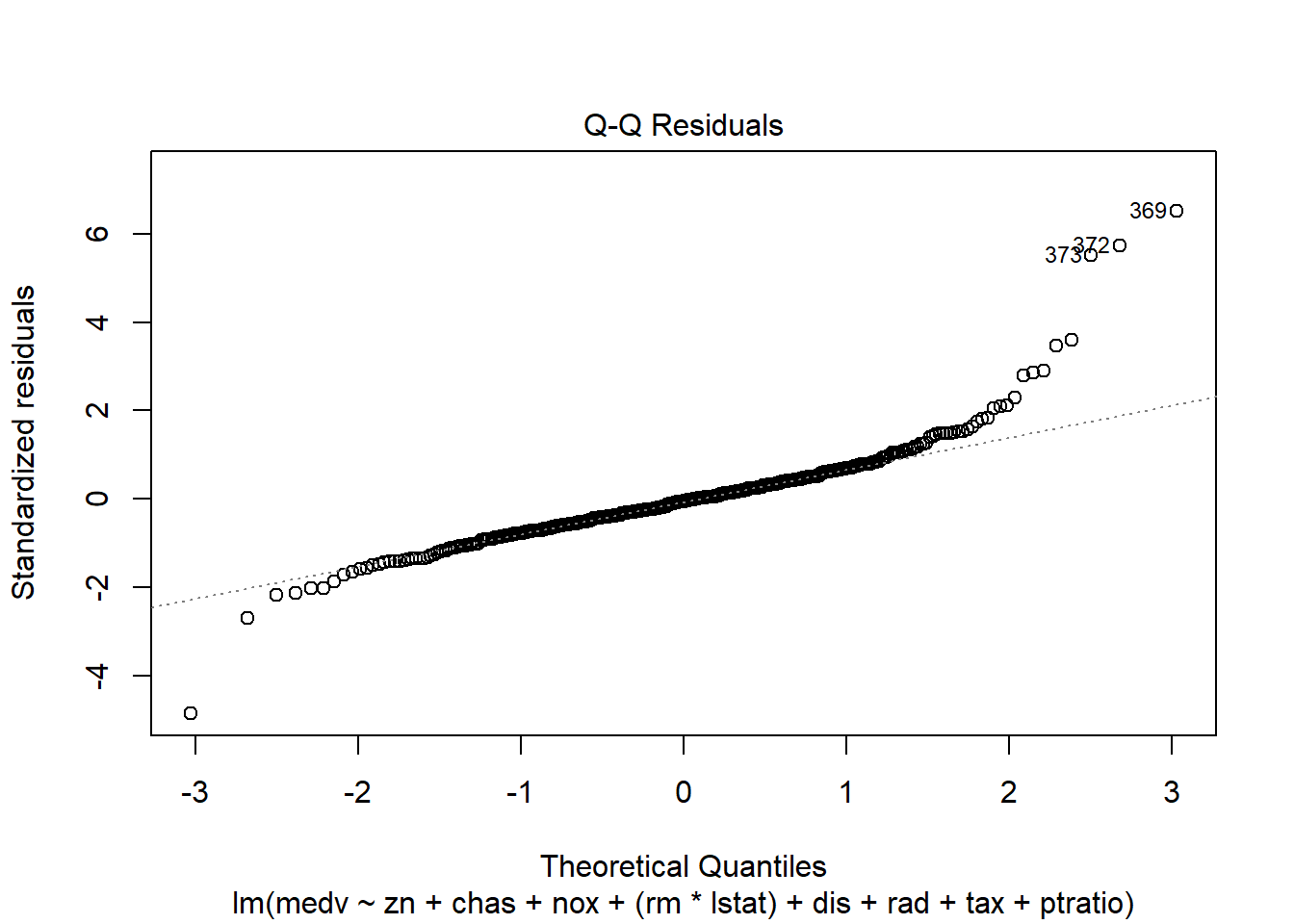

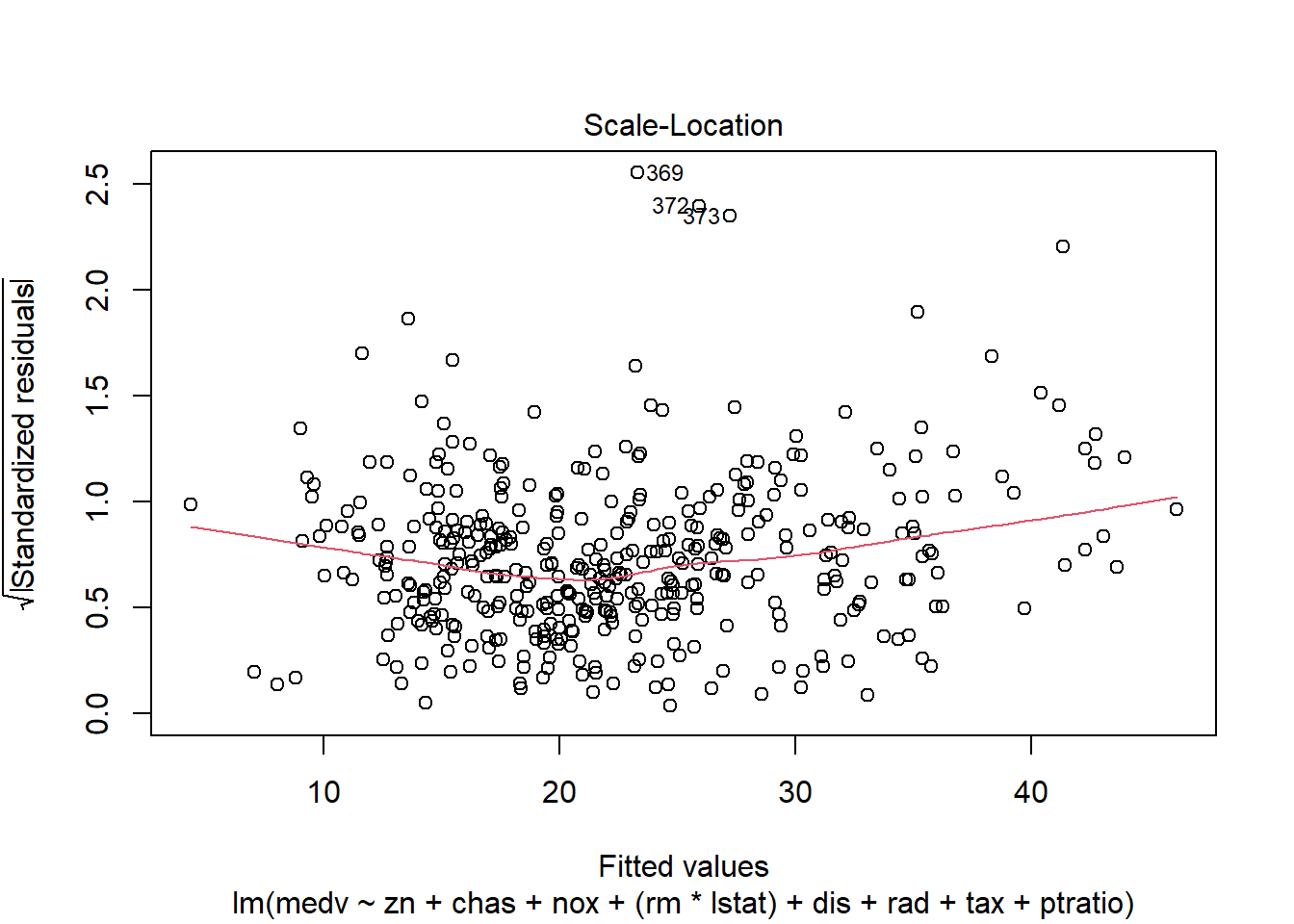

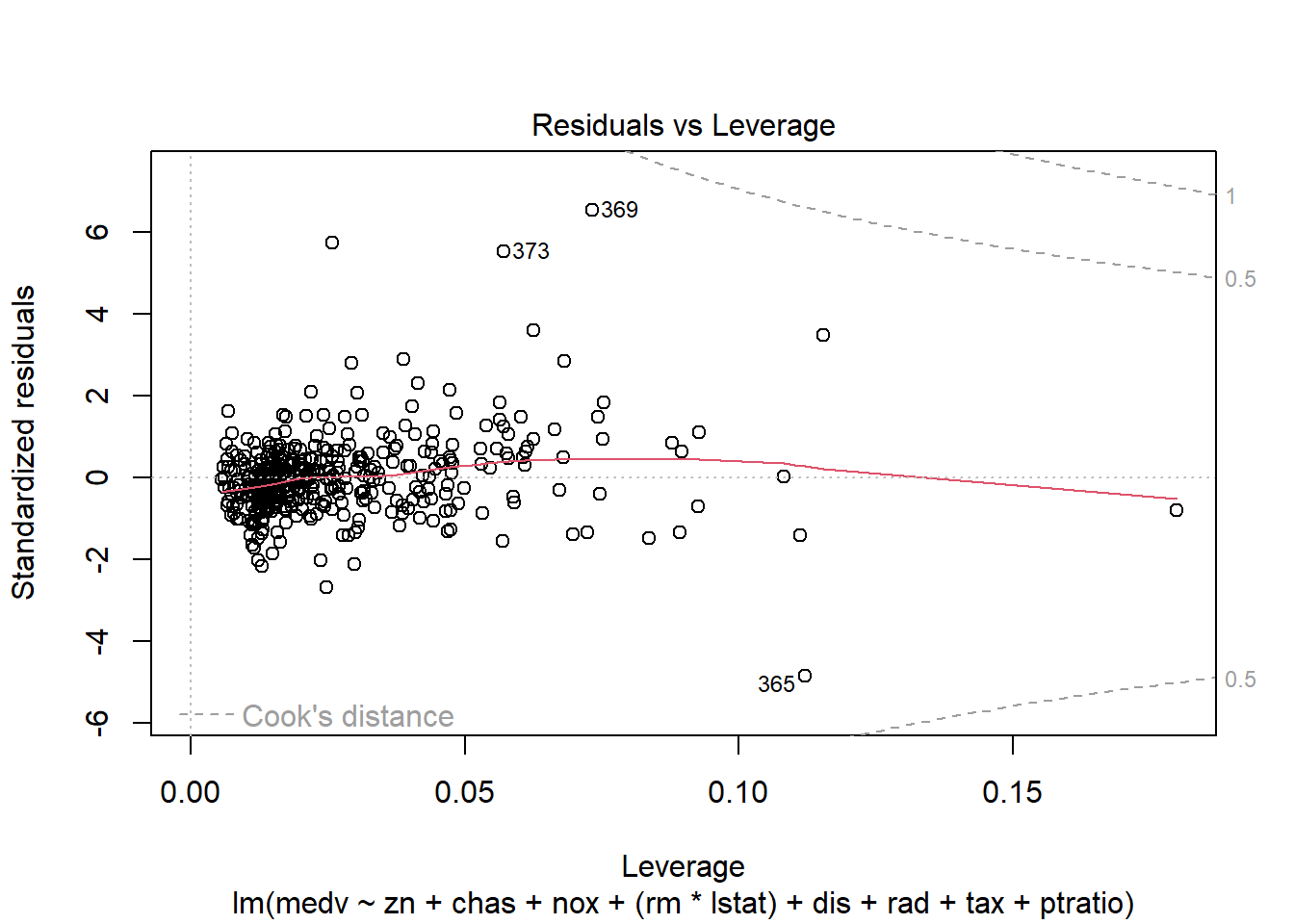

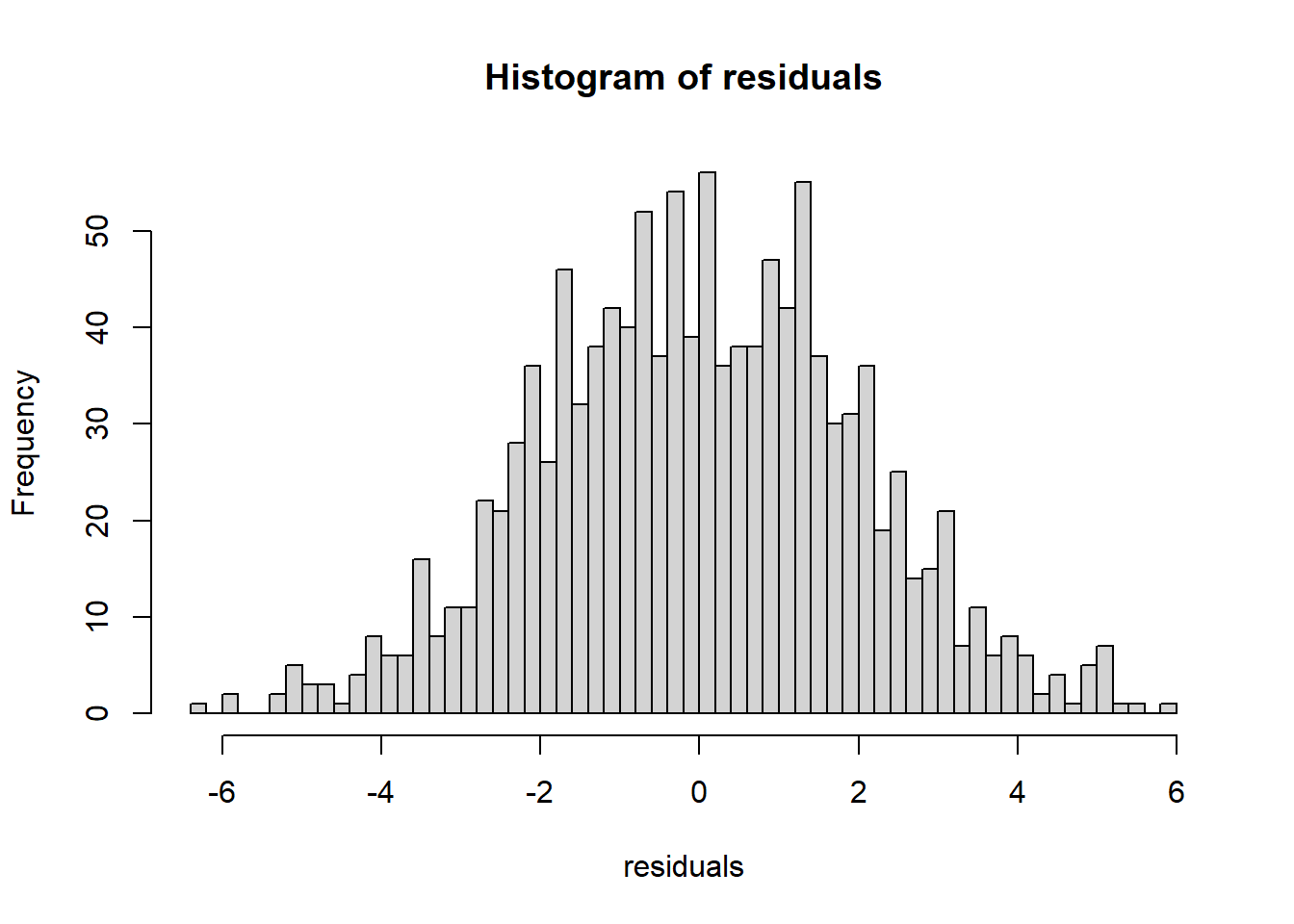

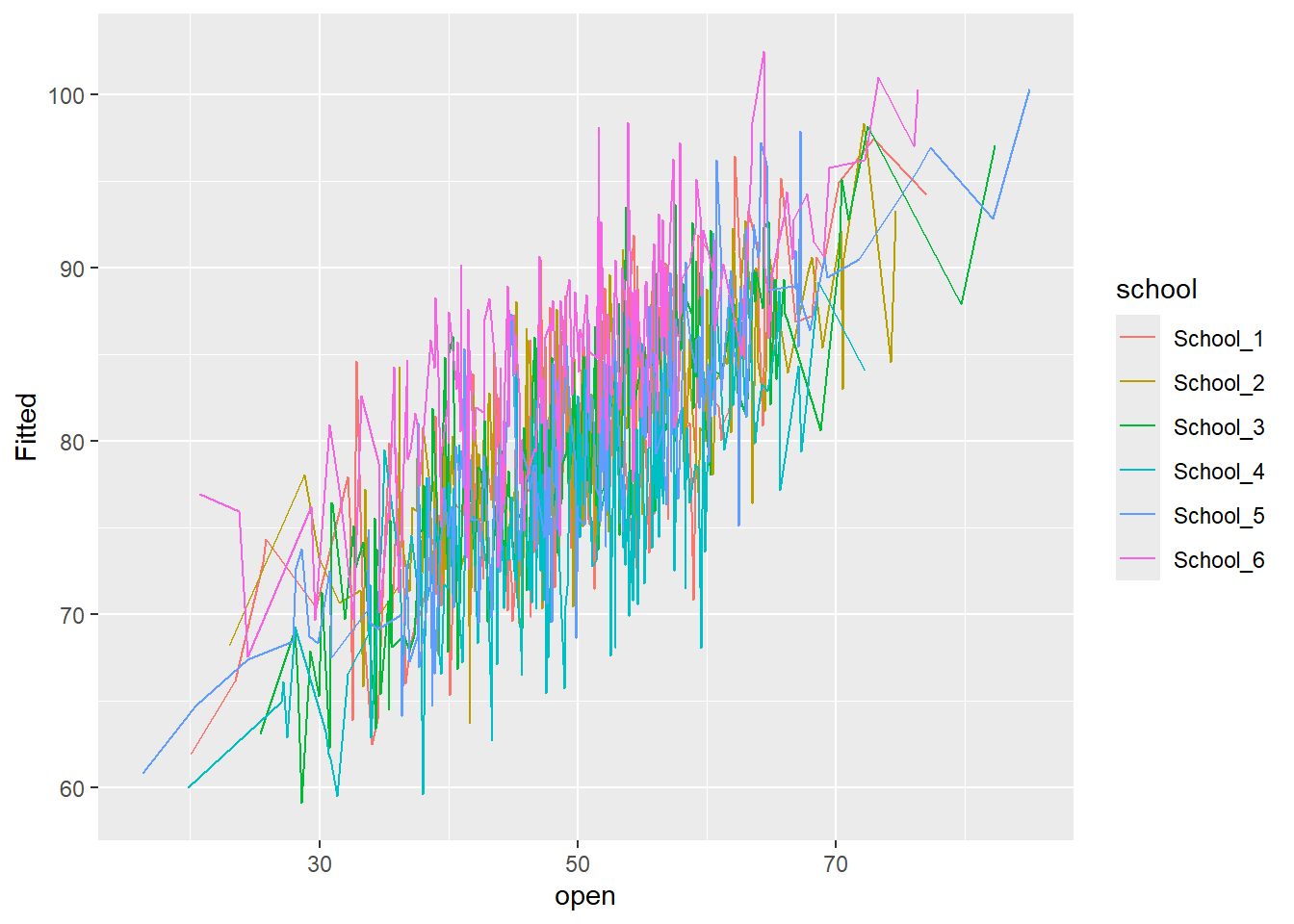

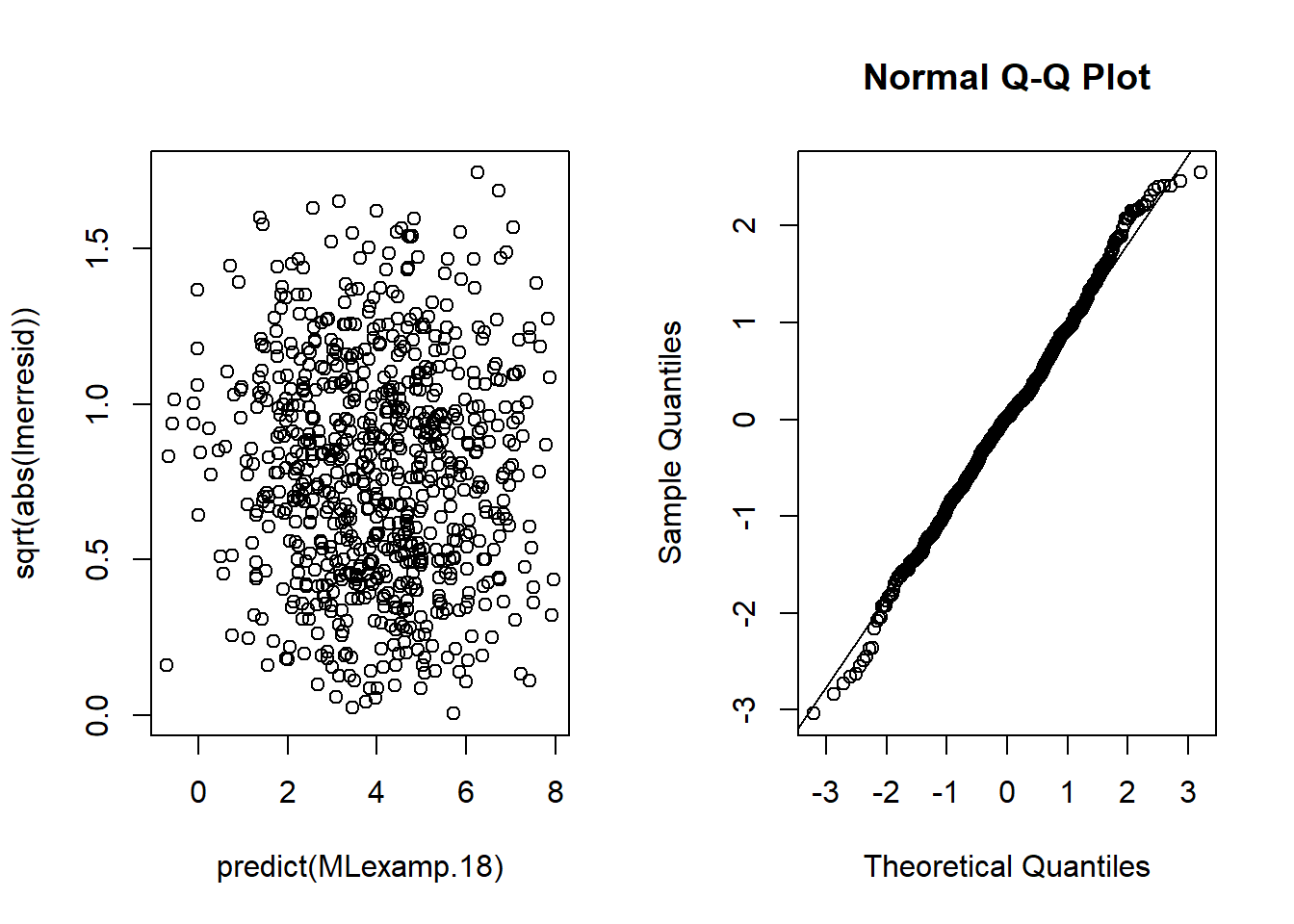

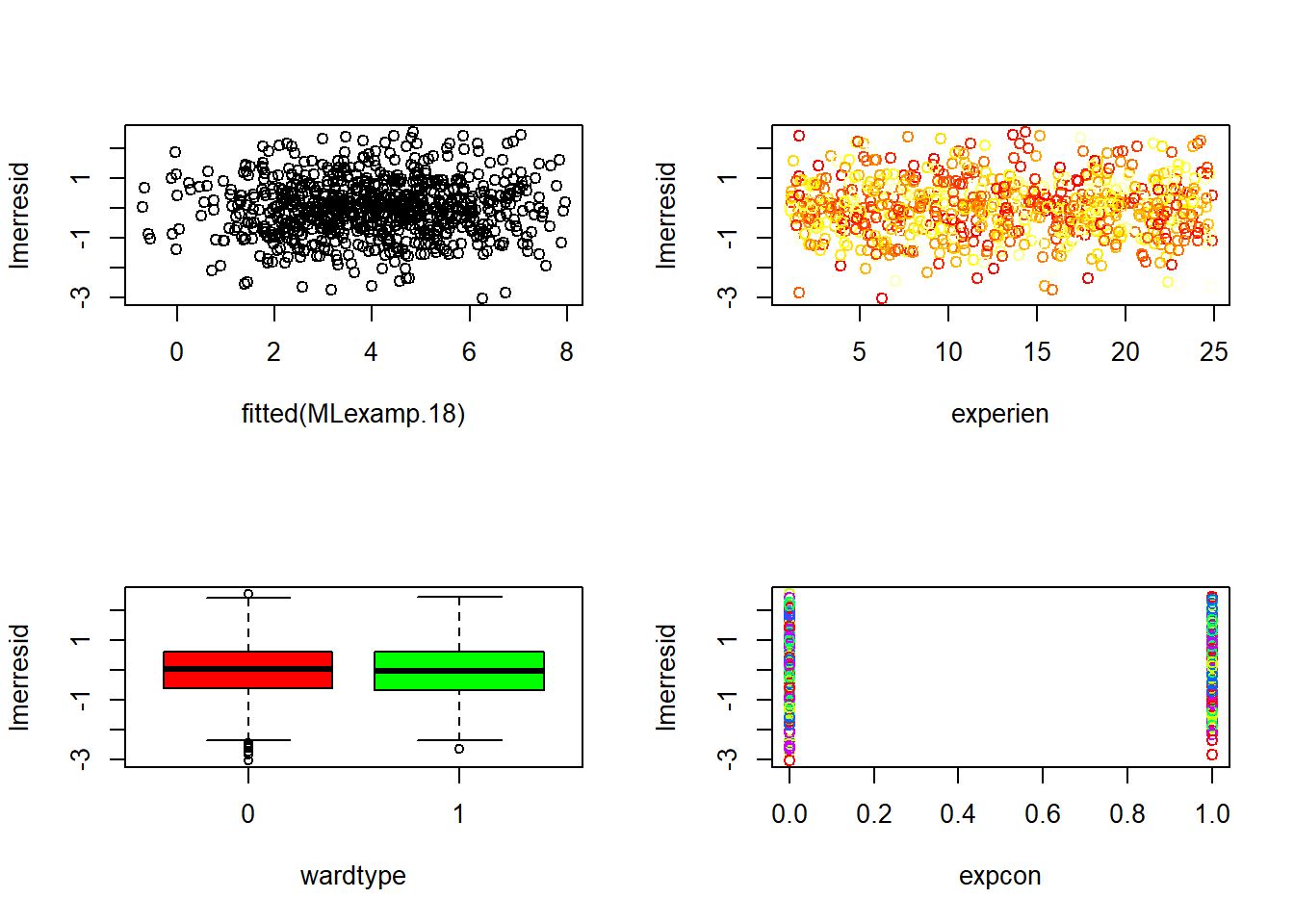

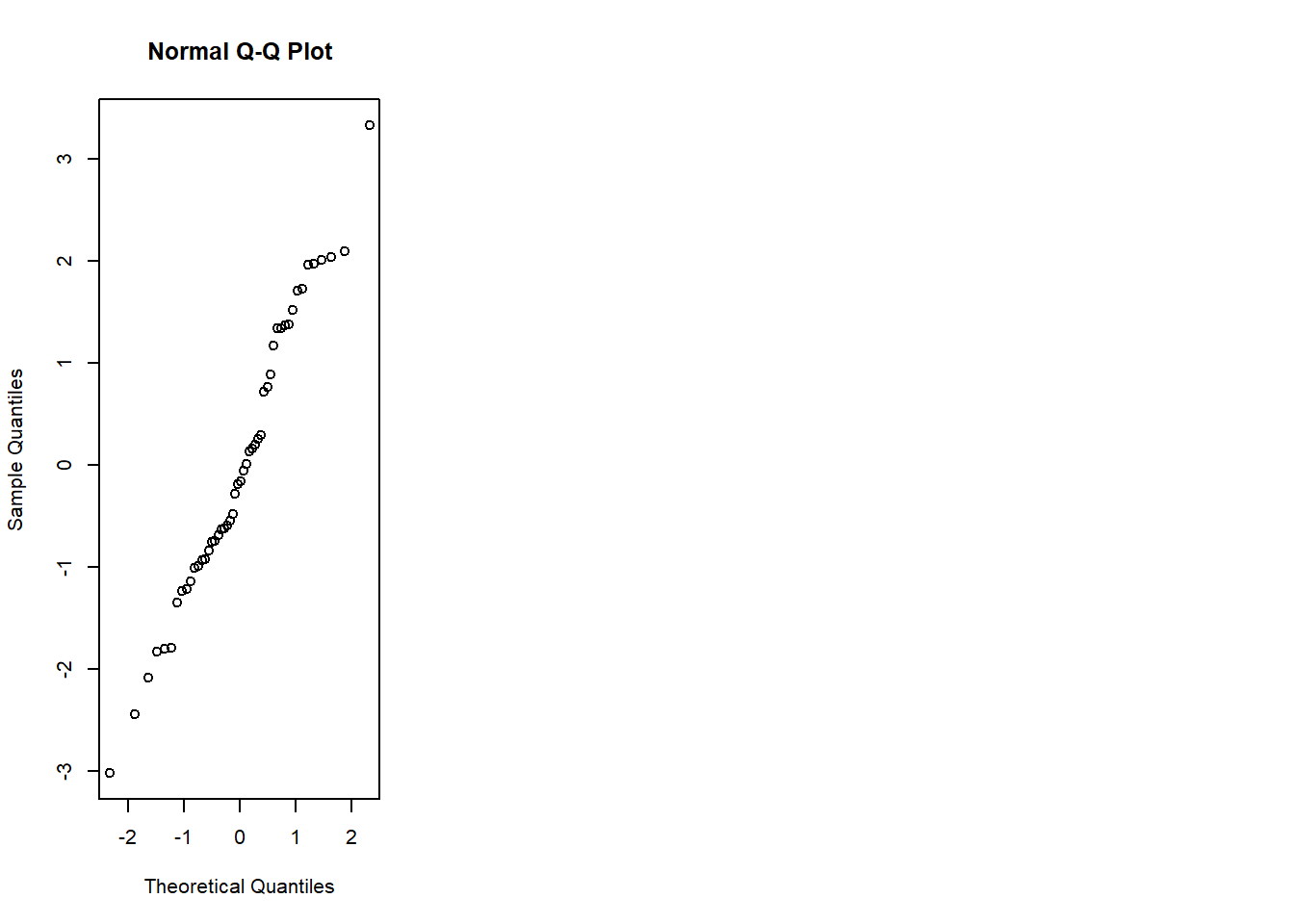

6.3.14 Plot model to check assumptions

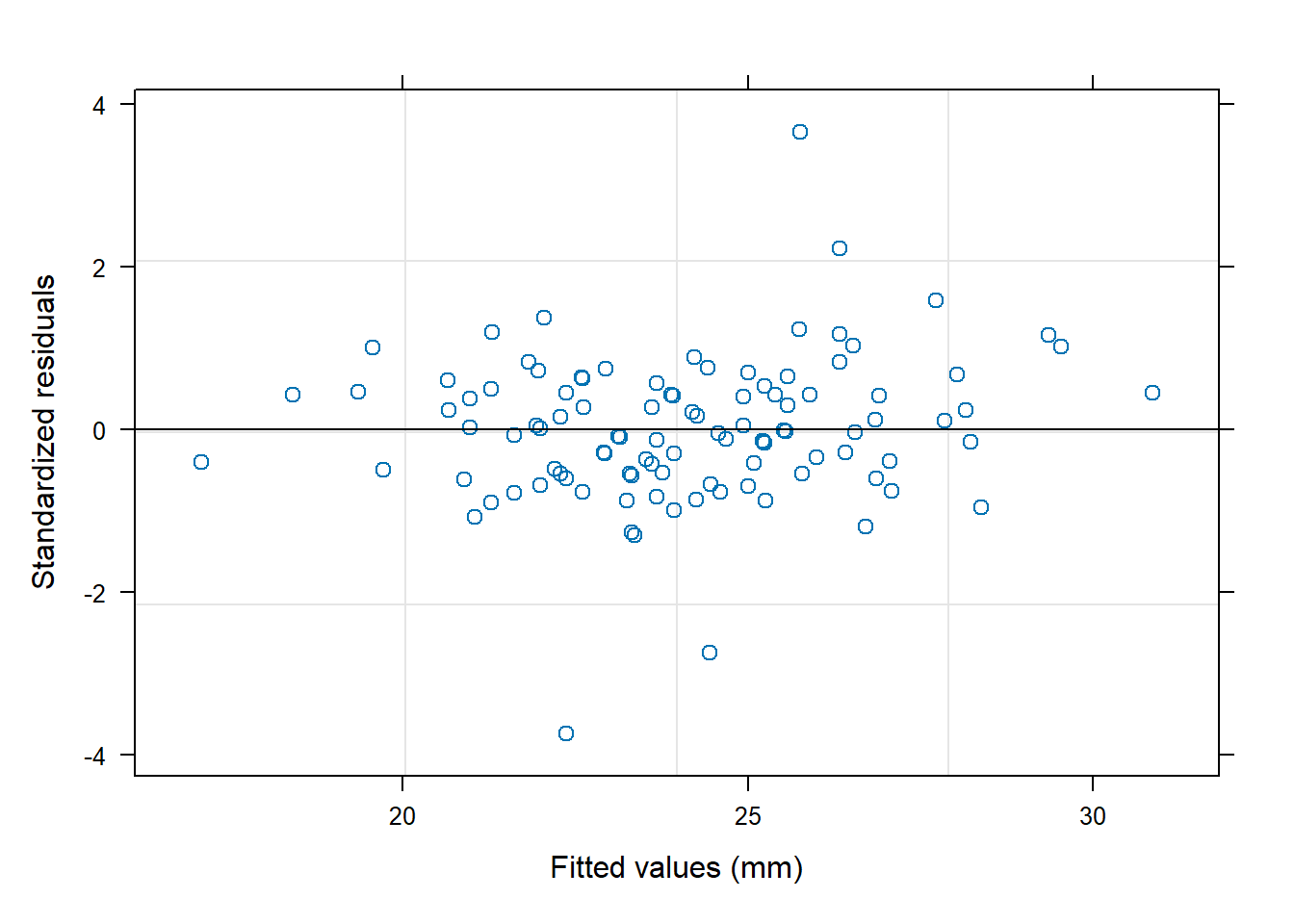

The default plot(lm_object) produces key diagnostic plots:

- residuals vs fitted (linearity, heteroscedasticity),

- normal Q-Q (normality of residuals),

- scale-location (variance stability),

- residuals vs leverage (influential points).

6.3.14.2 F test of model

ANOVA for the fitted model provides model-level significance and decomposition of sums of squares.

| Df | Sum Sq | Mean Sq | F value | Pr(>F) | |

|---|---|---|---|---|---|

| zn | 1 | 4019.0308 | 4019.03076 | 179.38831 | 0e+00 |

| chas | 1 | 1011.5766 | 1011.57663 | 45.15144 | 0e+00 |

| nox | 1 | 2785.8086 | 2785.80857 | 124.34378 | 0e+00 |

| rm | 1 | 8487.0727 | 8487.07268 | 378.81810 | 0e+00 |

| dis | 1 | 951.7774 | 951.77743 | 42.48232 | 0e+00 |

| rad | 1 | 558.8550 | 558.85503 | 24.94434 | 9e-07 |

| tax | 1 | 767.8718 | 767.87176 | 34.27374 | 0e+00 |

| ptratio | 1 | 1119.3945 | 1119.39453 | 49.96386 | 0e+00 |

| lstat | 1 | 3024.0665 | 3024.06652 | 134.97836 | 0e+00 |

| Residuals | 397 | 8894.4215 | 22.40408 | NA | NA |

6.3.14.3 Coefficients table

This is the standard regression table with estimates, SEs, t-statistics, and p-values.

| Estimate | Std. Error | t value | Pr(>|t|) | |

|---|---|---|---|---|

| (Intercept) | 22.5089571 | 0.2351584 | 95.718273 | 0.0000000 |

| zn | 1.0160975 | 0.3346703 | 3.036115 | 0.0025545 |

| chas | 0.7589909 | 0.2331142 | 3.255876 | 0.0012275 |

| nox | -2.7639762 | 0.4623875 | -5.977619 | 0.0000000 |

| rm | 2.1760130 | 0.3129267 | 6.953747 | 0.0000000 |

| dis | -3.9697228 | 0.4547944 | -8.728609 | 0.0000000 |

| rad | 2.1193795 | 0.5857829 | 3.618029 | 0.0003352 |

| tax | -2.3171470 | 0.6537962 | -3.544143 | 0.0004408 |

| ptratio | -1.9908948 | 0.3091666 | -6.439552 | 0.0000000 |

| lstat | -4.2244121 | 0.3636086 | -11.618019 | 0.0000000 |

6.3.14.4 Confidence intervals

Confidence intervals help quantify uncertainty around effect sizes and are generally more informative than p-values alone.

| 2.5 % | 97.5 % | |

|---|---|---|

| (Intercept) | 22.0466457 | 22.971269 |

| zn | 0.3581500 | 1.674045 |

| chas | 0.3006983 | 1.217283 |

| nox | -3.6730103 | -1.854942 |

| rm | 1.5608125 | 2.791214 |

| dis | -4.8638292 | -3.075616 |

| rad | 0.9677553 | 3.271004 |

| tax | -3.6024824 | -1.031812 |

| ptratio | -2.5987032 | -1.383086 |

| lstat | -4.9392512 | -3.509573 |

6.3.15 Add polynomial (quadratic) terms

When scatterplots suggest curvature, a quadratic term can capture nonlinearity without fully abandoning linear regression.

Here we add \(rm^2\) and \(lstat^2\). This often improves fit when relationships are curved.

model_trasf_poly <- lm(formula = medv ~ zn + chas + nox + I(rm^2) + dis + rad + tax +

ptratio + I(lstat^2), data = data2)

summary(model_trasf_poly)##

## Call:

## lm(formula = medv ~ zn + chas + nox + I(rm^2) + dis + rad + tax +

## ptratio + I(lstat^2), data = data2)

##

## Residuals:

## Min 1Q Median 3Q Max

## -19.0736 -3.4029 -0.6212 2.8340 29.4942

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 22.2859 0.3526 63.212 < 2e-16 ***

## zn 1.5524 0.4096 3.790 0.000174 ***

## chas 0.9570 0.2834 3.377 0.000805 ***

## nox -4.4962 0.5455 -8.242 2.50e-15 ***

## I(rm^2) 1.5313 0.1589 9.637 < 2e-16 ***

## dis -3.3186 0.5644 -5.880 8.69e-09 ***

## rad 3.0529 0.7024 4.346 1.76e-05 ***

## tax -3.6647 0.7861 -4.662 4.28e-06 ***

## ptratio -2.6800 0.3706 -7.232 2.47e-12 ***

## I(lstat^2) -1.3170 0.1918 -6.865 2.56e-11 ***

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Residual standard error: 5.763 on 397 degrees of freedom

## Multiple R-squared: 0.583, Adjusted R-squared: 0.5735

## F-statistic: 61.67 on 9 and 397 DF, p-value: < 2.2e-16Practical note: polynomial terms can improve fit but may complicate interpretation. Always validate that the improvement generalizes (e.g., CV).

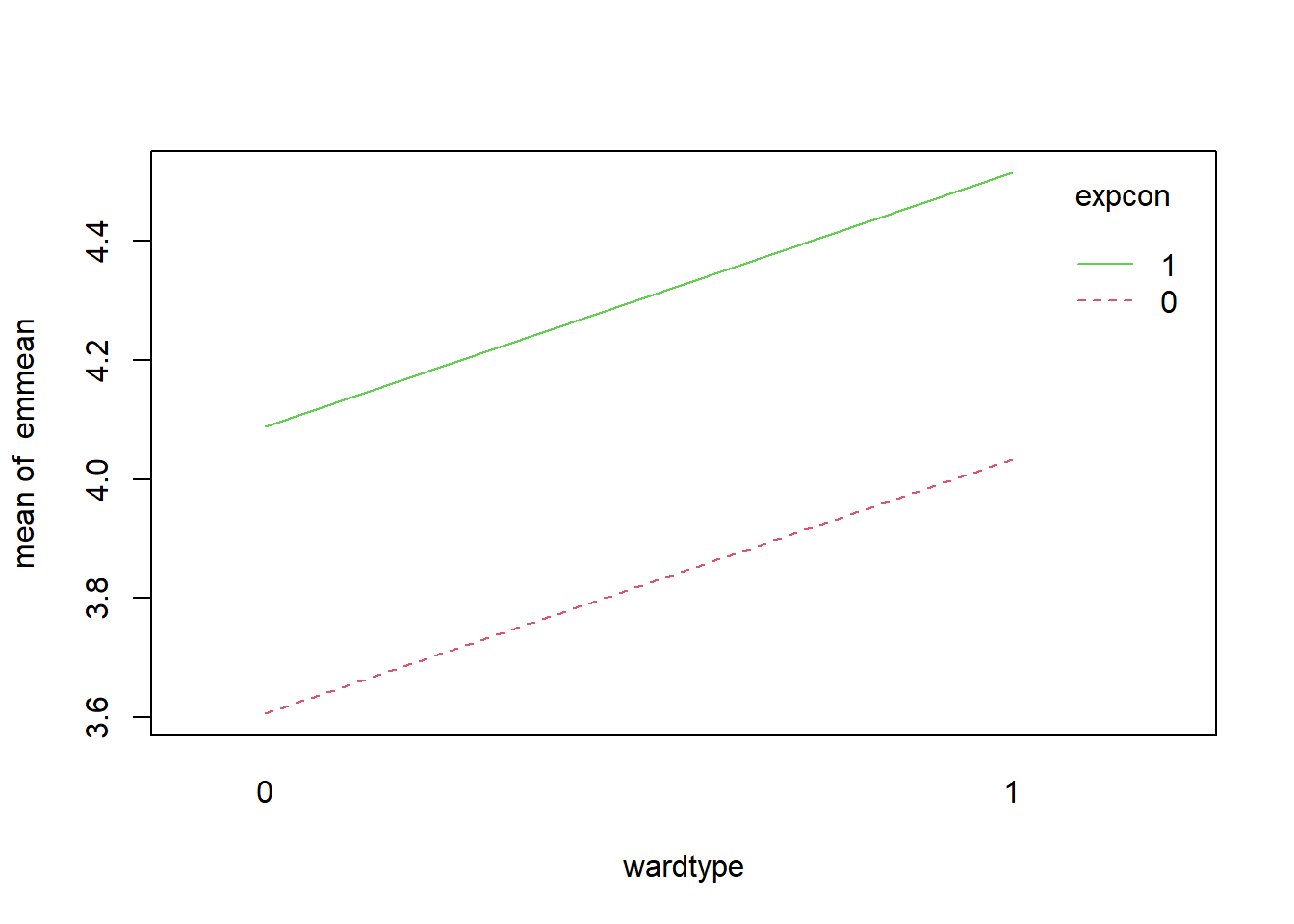

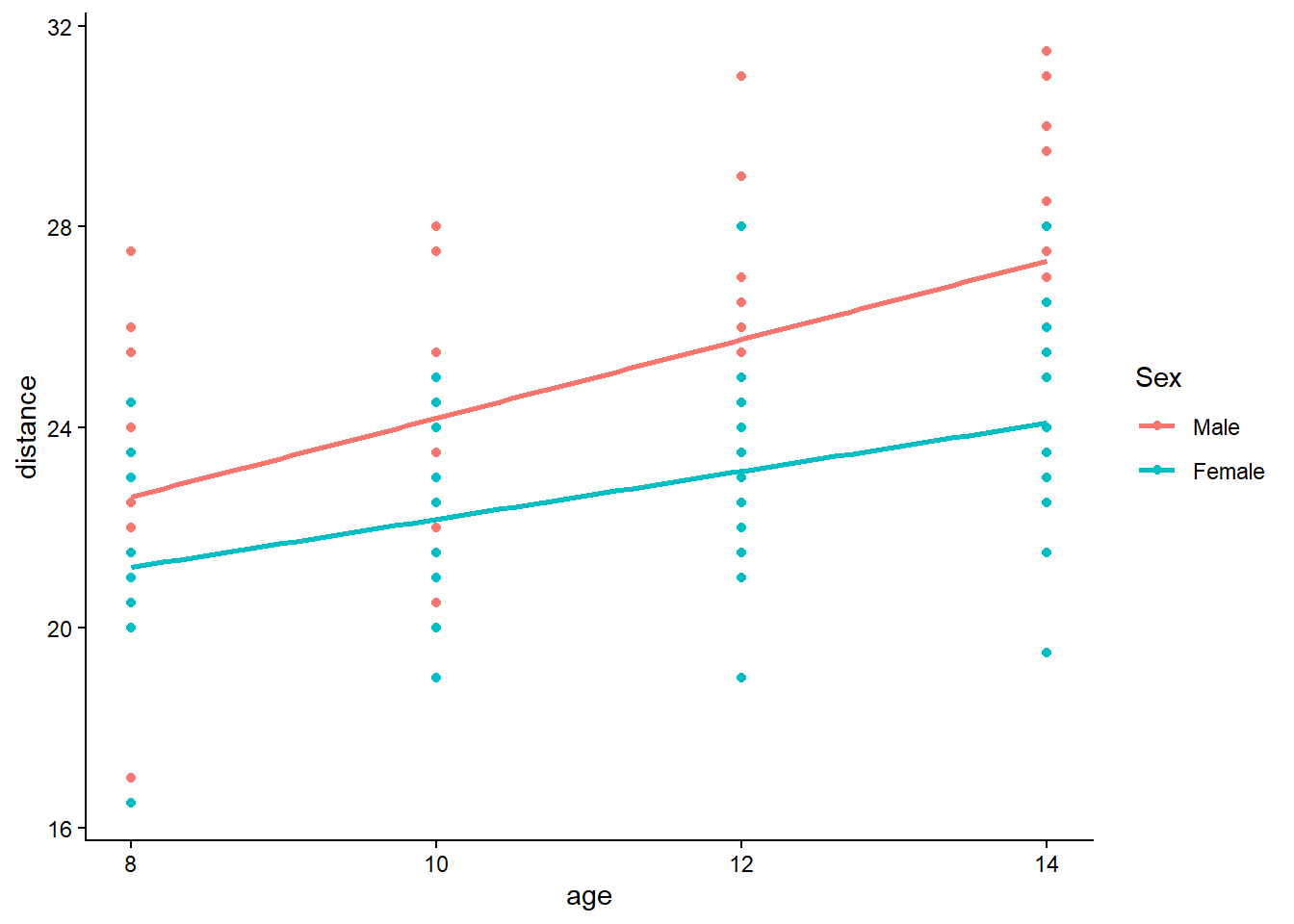

6.3.16 Add interaction terms

Interactions allow the effect of one predictor to depend on another. Conceptually, they represent effect modification.

Here we model the interaction \(rm \times lstat\). This is a meaningful interaction in Boston housing: the benefit of more rooms may differ across neighborhood socioeconomic status proxies.

rm and lstat

- R2 >0.7 indicates a good fit of the model

model_trasf_term <- lm(formula = medv ~ zn + chas + nox + (rm* lstat) + dis + rad + tax +

ptratio , data = data2)

summary(model_trasf_term)##

## Call:

## lm(formula = medv ~ zn + chas + nox + (rm * lstat) + dis + rad +

## tax + ptratio, data = data2)

##

## Residuals:

## Min 1Q Median 3Q Max

## -19.4595 -2.3458 -0.2389 1.7950 26.6992

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 21.4297 0.2379 90.060 < 2e-16 ***

## zn 0.5221 0.3046 1.714 0.087296 .

## chas 0.6538 0.2095 3.120 0.001941 **

## nox -2.0295 0.4218 -4.812 2.13e-06 ***

## rm 1.6459 0.2860 5.754 1.75e-08 ***

## lstat -5.8715 0.3669 -16.002 < 2e-16 ***

## dis -3.1810 0.4161 -7.645 1.60e-13 ***

## rad 1.9251 0.5262 3.658 0.000288 ***

## tax -1.9187 0.5883 -3.261 0.001205 **

## ptratio -1.5554 0.2811 -5.534 5.70e-08 ***

## rm:lstat -1.8202 0.1852 -9.830 < 2e-16 ***

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Residual standard error: 4.249 on 396 degrees of freedom

## Multiple R-squared: 0.7739, Adjusted R-squared: 0.7682

## F-statistic: 135.5 on 10 and 396 DF, p-value: < 2.2e-16Diagnostic plots remain essential because adding interactions can create leverage points and change residual structure.

6.3.17 Robust regression

Outliers and influential points can dominate OLS. Robust regression (rlm) downweights extreme residuals and can produce more stable estimates.

This is especially relevant when: - the dataset contains measurement errors, - there are heavy tails, - influential observations distort inference.

robust_model_term <- rlm(medv ~ zn + chas + nox + (rm* lstat) + dis + rad + tax +

ptratio , data = data2)

summary(robust_model_term)##

## Call: rlm(formula = medv ~ zn + chas + nox + (rm * lstat) + dis + rad +

## tax + ptratio, data = data2)

## Residuals:

## Min 1Q Median 3Q Max

## -20.90999 -1.74873 -0.09845 1.92931 33.87244

##

## Coefficients:

## Value Std. Error t value

## (Intercept) 20.9624 0.1656 126.5763

## zn 0.1998 0.2120 0.9424

## chas 0.5198 0.1458 3.5643

## nox -1.3458 0.2935 -4.5846

## rm 2.8773 0.1991 14.4524

## lstat -4.5706 0.2554 -17.8979

## dis -1.9208 0.2896 -6.6326

## rad 0.9005 0.3663 2.4586

## tax -1.7199 0.4095 -4.2004

## ptratio -1.2451 0.1956 -6.3652

## rm:lstat -1.9899 0.1289 -15.4398

##

## Residual standard error: 2.698 on 396 degrees of freedomPractical note: robust regression changes the objective function and standard inference is different. It’s often used for sensitivity analysis rather than as the sole primary model.

6.3.18 Create a model before transforming data

To understand the impact of transformation, we fit the analogous model on the original (non-transformed) training dataset. This helps assess whether transformation improves: - fit, - residual behavior, - predictive performance.

model_trasf_orig <- lm(formula = medv ~ zn + chas + nox + rm + dis + rad + tax +

ptratio + lstat, data = data)

summary(model_trasf_orig)##

## Call:

## lm(formula = medv ~ zn + chas + nox + rm + dis + rad + tax +

## ptratio + lstat, data = data)

##

## Residuals:

## Min 1Q Median 3Q Max

## -14.219 -2.729 -0.463 1.920 25.992

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 22.5094 0.2430 92.619 < 2e-16 ***

## zn 0.8232 0.3754 2.193 0.028904 *

## chas 0.6582 0.2412 2.728 0.006652 **

## nox -1.9351 0.4727 -4.093 5.15e-05 ***

## rm 2.3985 0.3128 7.668 1.36e-13 ***

## dis -2.9289 0.4565 -6.416 3.99e-10 ***

## rad 2.2109 0.6348 3.483 0.000551 ***

## tax -2.1880 0.6896 -3.173 0.001627 **

## ptratio -2.0274 0.3254 -6.230 1.19e-09 ***

## lstat -4.3534 0.3844 -11.325 < 2e-16 ***

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Residual standard error: 4.895 on 397 degrees of freedom

## Multiple R-squared: 0.7212, Adjusted R-squared: 0.7149

## F-statistic: 114.1 on 9 and 397 DF, p-value: < 2.2e-166.3.19 K-fold cross validation

Cross-validation provides a more direct estimate of predictive performance. It is especially important after model selection or when comparing multiple model families.

Here we use 10-fold CV via DAAG::cv.glm().

## Warning: package 'DAAG' was built under R version 4.4.3set.seed(123)

model_trasf_term_cv <- glm( medv ~ zn + chas + nox + (rm* lstat) + dis + rad + tax +

ptratio , data = data2)

cv.err <- cv.glm(data2, model_trasf_term_cv, K = 10)$delta

cv.err ## [1] 19.24588 19.15400Interpretation:

- The delta output typically includes raw and adjusted CV error estimates.

- Compare CV errors across competing models; smaller values indicate better predictive performance.

6.3.20 Nonnest models comparisons

Once you have multiple candidate models (original scale, transformed, polynomial, interaction), compare them side-by-side.

AIC is one way; CV error is another. In applied practice, you often consider both: - AIC for model parsimony, - CV for predictive robustness.

| df | AIC | |

|---|---|---|

| model_trasf_term | 12 | 2345.492 |

| model_trasf | 11 | 2432.353 |

| model_trasf_orig | 11 | 2459.780 |

| model_trasf_poly | 11 | 2592.584 |

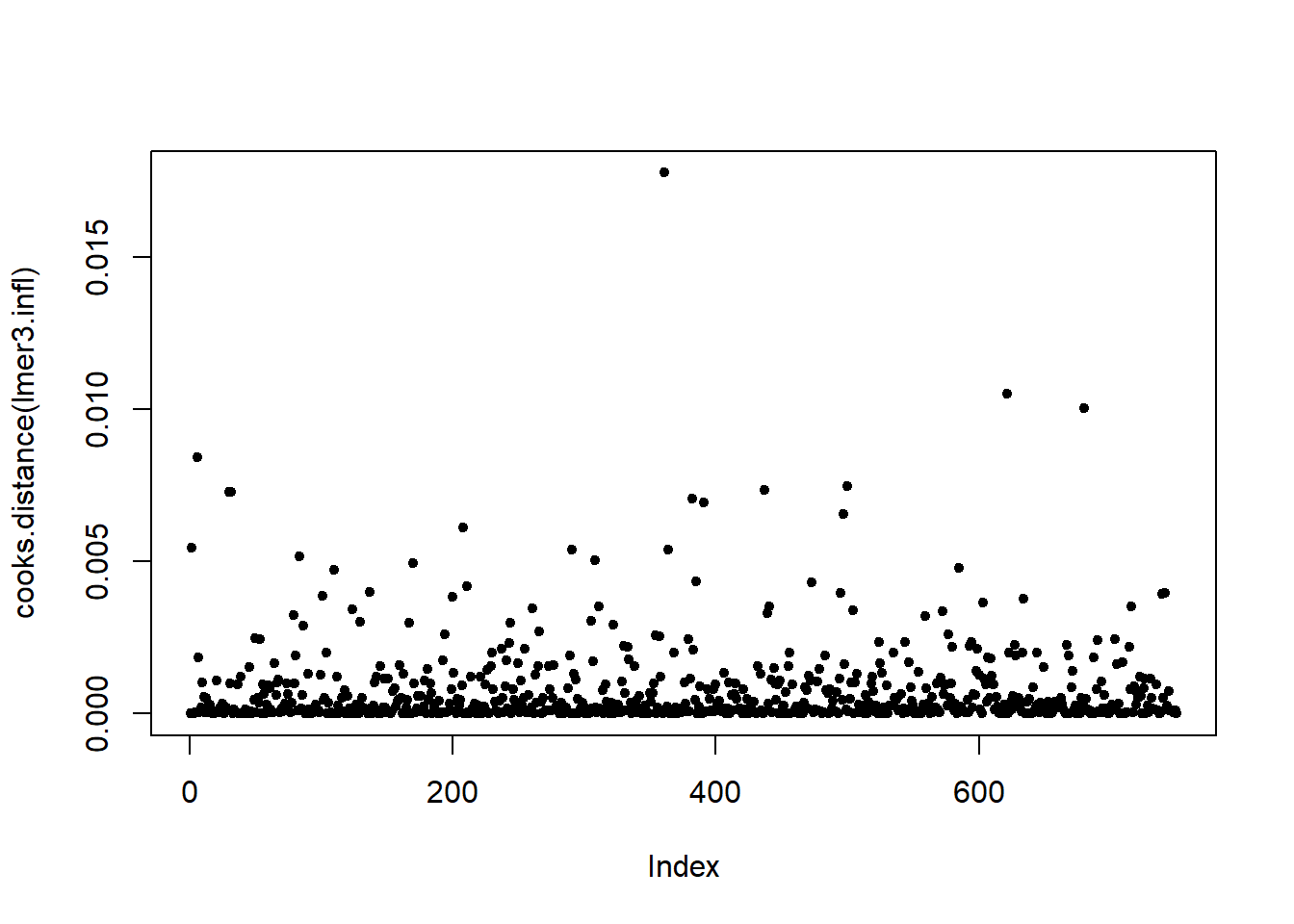

# interaction, transformation, original, polynomial by order (`data` has been normalized but not `data2`)6.3.21 Posterior predictive / diagnostic checks

Even after selecting a “best” model, the most important step is to verify assumptions and identify influential observations.

performance::check_model() provides a comprehensive set of diagnostics in one call:

- linearity,

- homoscedasticity,

- influential points,

- collinearity,

- normality of residuals.

## Warning: package 'performance' was built under R version 4.4.3

In practice, if diagnostics are poor, consider: - transformations, - adding nonlinear terms, - robust SEs, - or moving to a more appropriate model class.

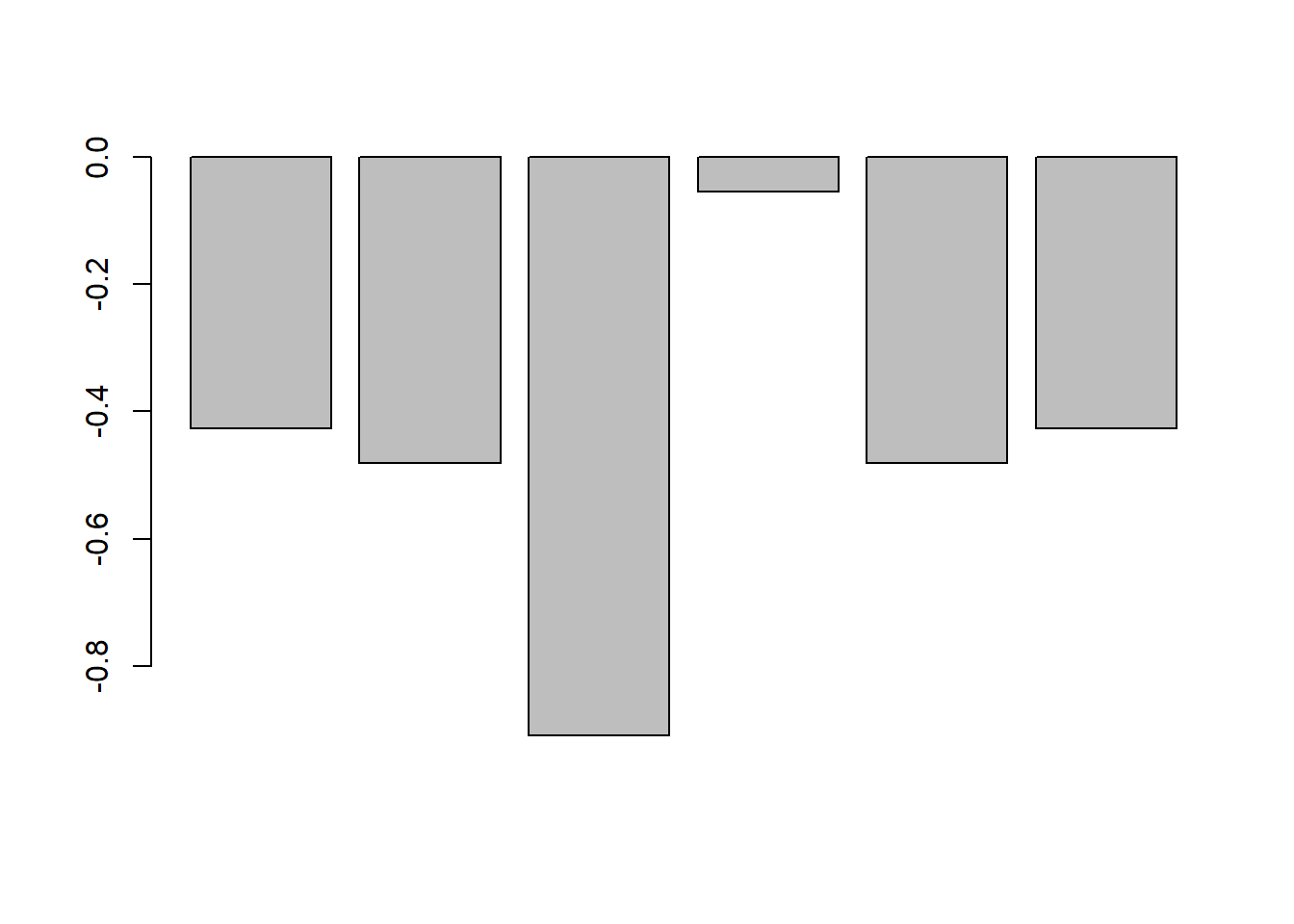

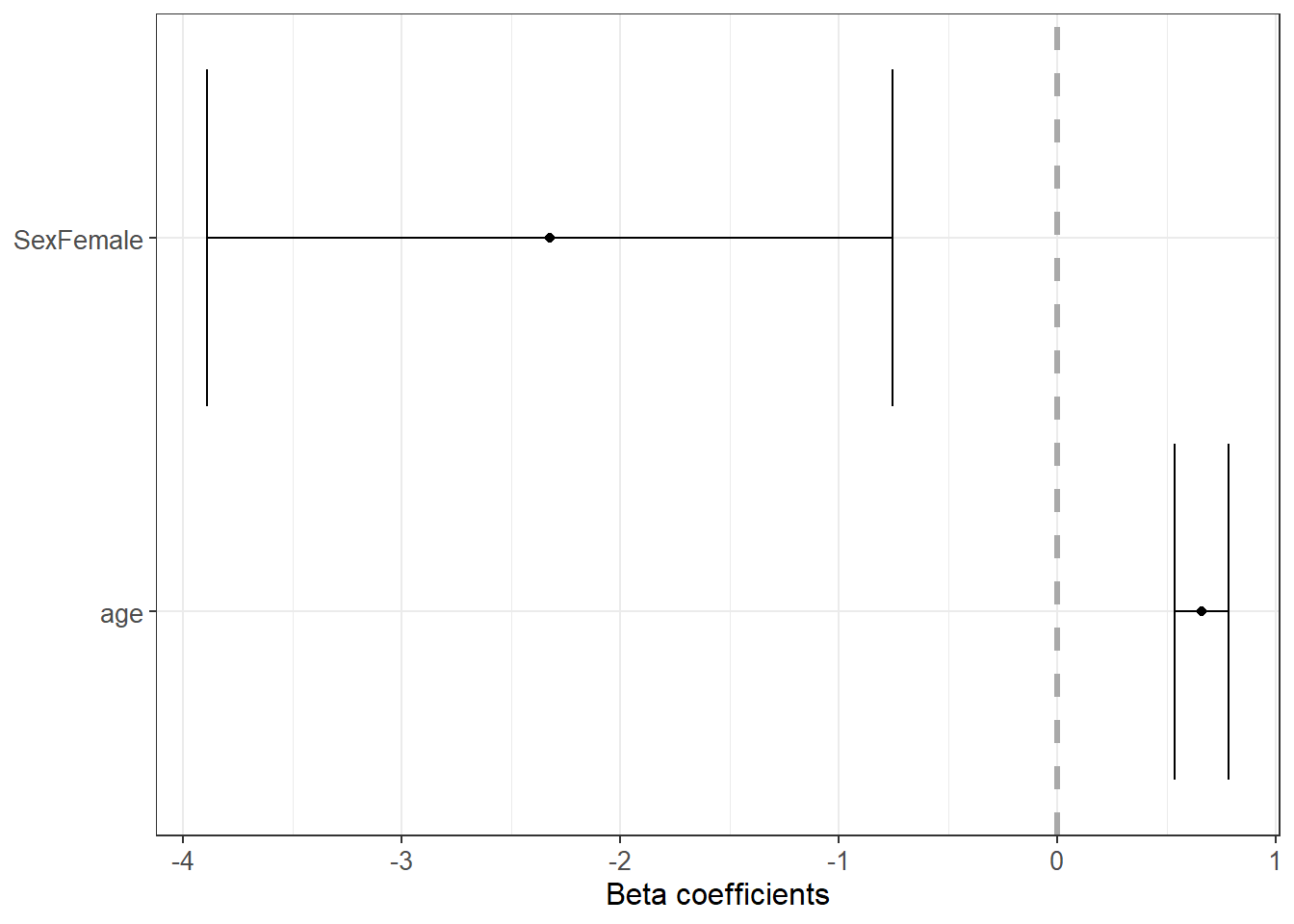

6.3.22 Forest plot for coefficients

Coefficient plots help communicate results clearly. They emphasize effect size and uncertainty, not only p-values.

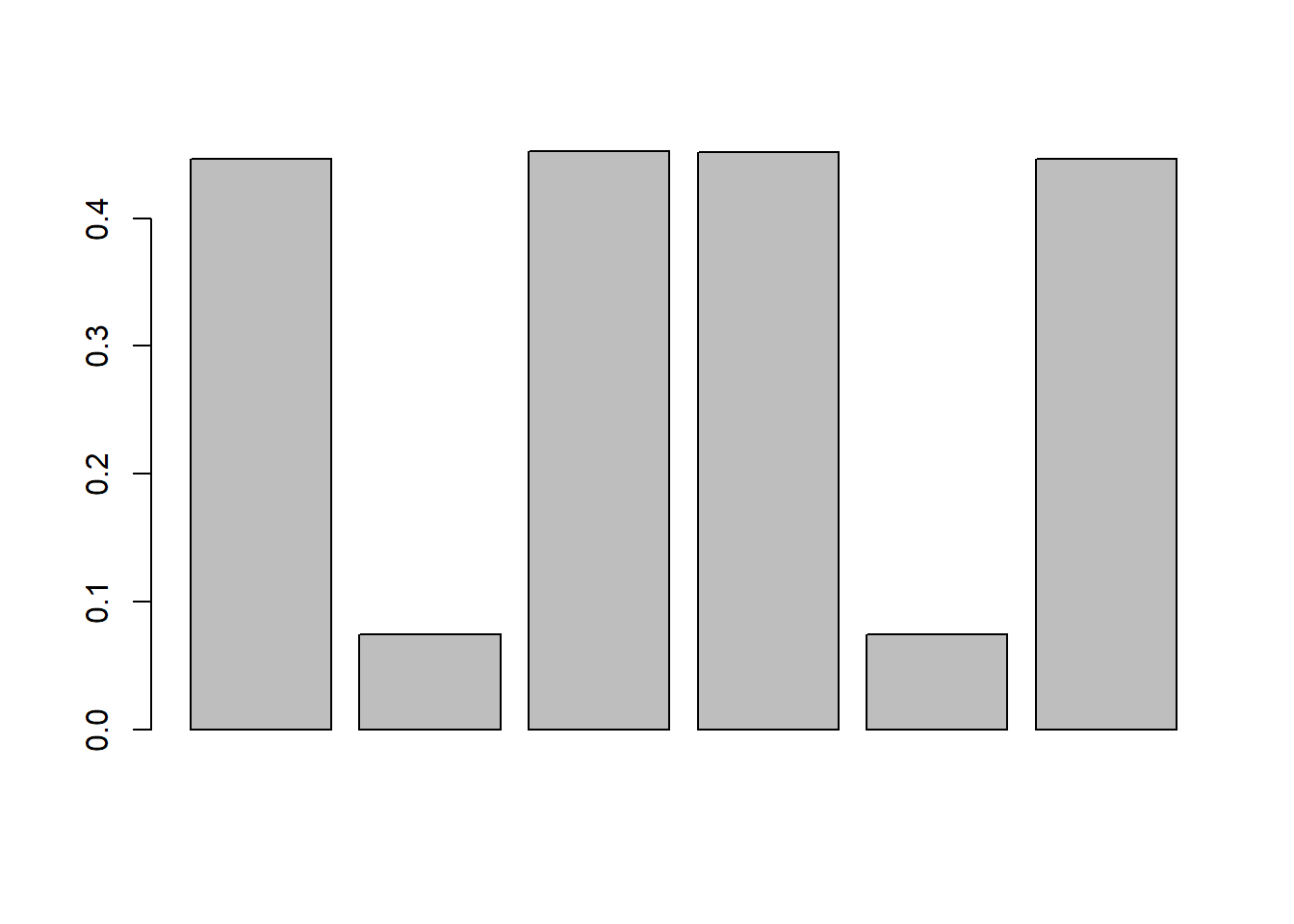

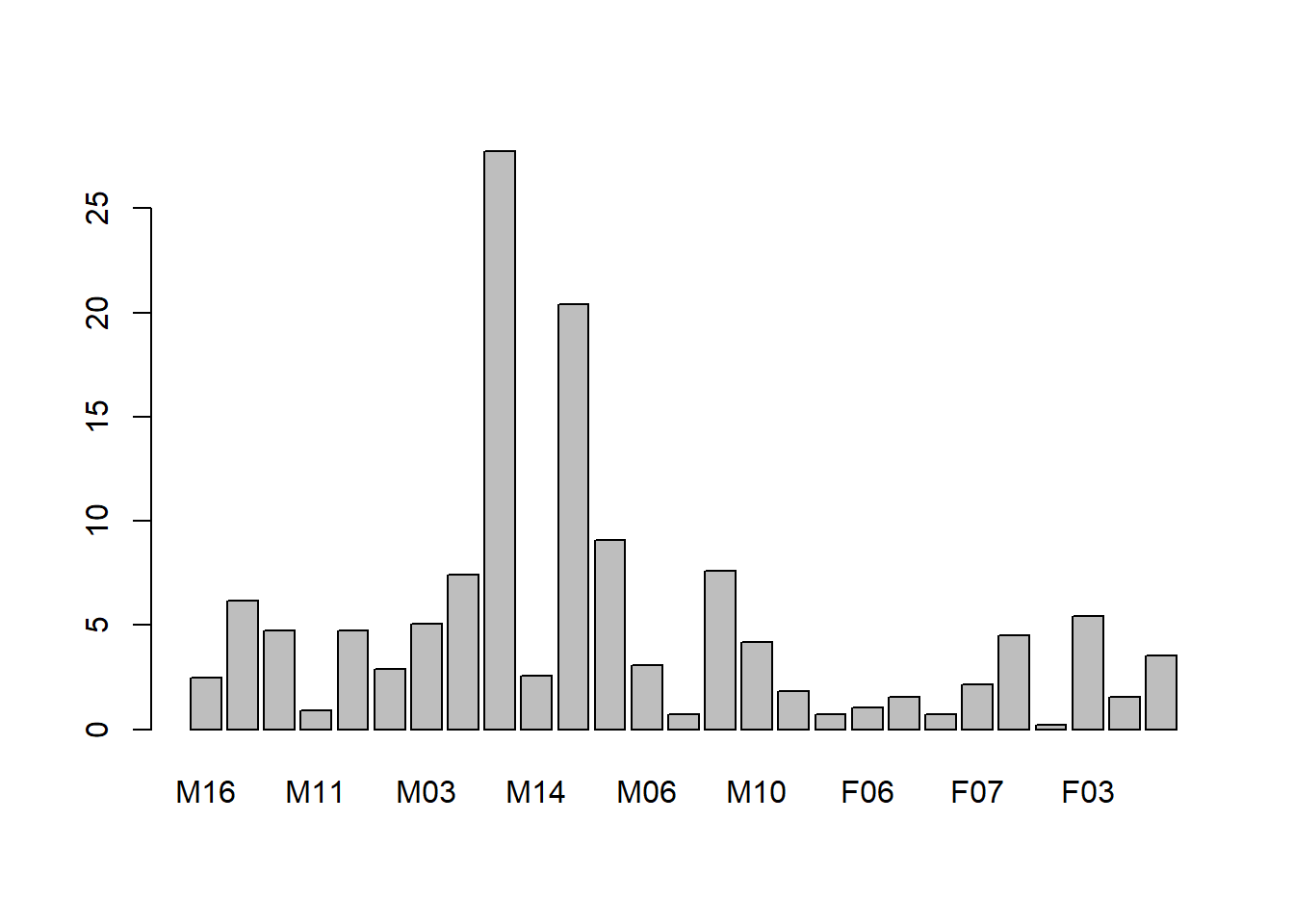

6.3.23 Relative Importance

When predictors are correlated, raw coefficients are not always a good measure of “importance.” Relative importance methods attempt to quantify each predictor’s contribution to explained variance.

Here, relaimpo is used with bootstrap resampling to assess stability.

## Warning: package 'relaimpo' was built under R version 4.4.3## Warning: package 'survey' was built under R version 4.4.3## Warning: package 'mitools' was built under R version 4.4.3# calc.relimp(fit,type=c("lmg","last","first","pratt"),

# rela=TRUE)

# Bootstrap Measures of Relative Importance (1000 samples)

boot <- boot.relimp(model_trasf_term, b = 10, type =c("lmg" ), rank = TRUE,

# type =c("lmg","last","first","pratt")

diff = TRUE, rela = TRUE)

booteval.relimp(boot) # print result## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints## Response variable: medv

## Total response variance: 77.88147

## Analysis based on 407 observations

##

## 10 Regressors:

## zn chas nox rm lstat dis rad tax ptratio rm:lstat

## Proportion of variance explained by model: 77.39%

## Metrics are normalized to sum to 100% (rela=TRUE).

##

## Relative importance metrics:

##

## lmg

## zn 0.03220843

## chas 0.01925573

## nox 0.05753222

## rm 0.24841214

## lstat 0.32582422

## dis 0.04647715

## rad 0.03216196

## tax 0.05940953

## ptratio 0.09003437

## rm:lstat 0.08868423

##

## Average coefficients for different model sizes:

##

## 1X 2Xs 3Xs 4Xs 5Xs 6Xs

## zn 3.1505046 2.1309586 1.5419108 1.1885764 0.9622432 0.8066737

## chas 1.4317561 1.3828980 1.2922217 1.1827563 1.0710746 0.9666952

## nox -3.6683140 -2.8590532 -2.3987148 -2.1635246 -2.0628637 -2.0336275

## rm 5.8715576 5.1812470 4.6686609 4.2214027 3.7902531 3.3584729

## lstat -6.3961998 -6.2413211 -6.1543204 -6.0962610 -6.0505503 -6.0111059

## dis 2.3495853 0.6106749 -0.5986185 -1.4554886 -2.0655023 -2.4944977

## rad -3.2090101 -1.5469225 -0.4505596 0.2850626 0.7884232 1.1408255

## tax -4.1727995 -3.6061521 -3.1776467 -2.8413999 -2.5683300 -2.3434564

## ptratio -4.2048171 -3.4233285 -2.9476391 -2.6199136 -2.3633992 -2.1469790

## rm:lstat -0.7386853 -1.0072327 -1.1975350 -1.3430505 -1.4610987 -1.5602846

## 7Xs 8Xs 9Xs 10Xs

## zn 0.6942682 0.6122971 0.5558327 0.5221292

## chas 0.8734919 0.7916003 0.7191770 0.6537613

## nox -2.0351844 -2.0434312 -2.0444974 -2.0294702

## rm 2.9235837 2.4886854 2.0599352 1.6458985

## lstat -5.9756419 -5.9424093 -5.9088868 -5.8714971

## dis -2.7882468 -2.9825750 -3.1064663 -3.1809633

## rad 1.3969994 1.5963587 1.7666711 1.9250667

## tax -2.1625306 -2.0281734 -1.9455332 -1.9186629

## ptratio -1.9610124 -1.8027959 -1.6694147 -1.5553973

## rm:lstat -1.6447082 -1.7160362 -1.7745576 -1.8202278

##

##

## Confidence interval information ( 10 bootstrap replicates, bty= perc ):

## Relative Contributions with confidence intervals:

##

## Lower Upper

## percentage 0.95 0.95 0.95

## zn.lmg 0.0322 _______HIJ 0.0204 0.0357

## chas.lmg 0.0193 ______GHIJ 0.0016 0.0501

## nox.lmg 0.0575 ____EFG___ 0.0430 0.0708

## rm.lmg 0.2484 AB________ 0.1916 0.3284

## lstat.lmg 0.3258 AB________ 0.2617 0.3836

## dis.lmg 0.0465 _____FGHIJ 0.0286 0.0548

## rad.lmg 0.0322 ______GHIJ 0.0215 0.0465

## tax.lmg 0.0594 ___DEF____ 0.0479 0.0796

## ptratio.lmg 0.0900 __CD______ 0.0711 0.1202

## rm:lstat.lmg 0.0887 __CDE_____ 0.0606 0.1037

##

## Letters indicate the ranks covered by bootstrap CIs.

## (Rank bootstrap confidence intervals always obtained by percentile method)

## CAUTION: Bootstrap confidence intervals can be somewhat liberal.

##

##

## Differences between Relative Contributions:

##

## Lower Upper

## difference 0.95 0.95 0.95

## zn-chas.lmg 0.0130 -0.0192 0.0276

## zn-nox.lmg -0.0253 * -0.0504 -0.0137

## zn-rm.lmg -0.2162 * -0.3028 -0.1559

## zn-lstat.lmg -0.2936 * -0.3478 -0.2274

## zn-dis.lmg -0.0143 -0.0235 0.0019

## zn-rad.lmg 0.0000 -0.0221 0.0084

## zn-tax.lmg -0.0272 * -0.0532 -0.0166

## zn-ptratio.lmg -0.0578 * -0.0911 -0.0377

## zn-rm:lstat.lmg -0.0565 * -0.0746 -0.0305

## chas-nox.lmg -0.0383 * -0.0584 -0.0033

## chas-rm.lmg -0.2292 * -0.3144 -0.1753

## chas-lstat.lmg -0.3066 * -0.3672 -0.2117

## chas-dis.lmg -0.0272 -0.0385 0.0137

## chas-rad.lmg -0.0129 -0.0449 0.0201

## chas-tax.lmg -0.0402 * -0.0781 -0.0008

## chas-ptratio.lmg -0.0708 * -0.1187 -0.0260

## chas-rm:lstat.lmg -0.0694 * -0.1021 -0.0338

## nox-rm.lmg -0.1909 * -0.2781 -0.1378

## nox-lstat.lmg -0.2683 * -0.3298 -0.2047

## nox-dis.lmg 0.0111 -0.0010 0.0275

## nox-rad.lmg 0.0254 * 0.0048 0.0288

## nox-tax.lmg -0.0019 -0.0283 0.0061

## nox-ptratio.lmg -0.0325 * -0.0689 -0.0130

## nox-rm:lstat.lmg -0.0312 * -0.0525 -0.0008

## rm-lstat.lmg -0.0774 -0.1920 0.0488

## rm-dis.lmg 0.2019 * 0.1368 0.2922

## rm-rad.lmg 0.2163 * 0.1599 0.3069

## rm-tax.lmg 0.1890 * 0.1310 0.2806

## rm-ptratio.lmg 0.1584 * 0.1065 0.2525

## rm-rm:lstat.lmg 0.1597 * 0.1047 0.2605

## lstat-dis.lmg 0.2793 * 0.2253 0.3288

## lstat-rad.lmg 0.2937 * 0.2231 0.3519

## lstat-tax.lmg 0.2664 * 0.1899 0.3229

## lstat-ptratio.lmg 0.2358 * 0.1493 0.2985

## lstat-rm:lstat.lmg 0.2371 * 0.1658 0.3018

## dis-rad.lmg 0.0143 -0.0088 0.0231

## dis-tax.lmg -0.0129 * -0.0419 -0.0043

## dis-ptratio.lmg -0.0436 * -0.0825 -0.0303

## dis-rm:lstat.lmg -0.0422 * -0.0660 -0.0071

## rad-tax.lmg -0.0272 * -0.0331 -0.0205

## rad-ptratio.lmg -0.0579 * -0.0737 -0.0367

## rad-rm:lstat.lmg -0.0565 * -0.0714 -0.0240

## tax-ptratio.lmg -0.0306 * -0.0462 -0.0123

## tax-rm:lstat.lmg -0.0293 -0.0453 0.0050

## ptratio-rm:lstat.lmg 0.0014 -0.0253 0.0346

##

## * indicates that CI for difference does not include 0.

## CAUTION: Bootstrap confidence intervals can be somewhat liberal.## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

## Warning in norm.inter(t, alpha): extreme order statistics used as endpoints

Practical note: bootstrap sample size b should be much larger (e.g., 500–2000) for stable inference; here it is small for demonstration.

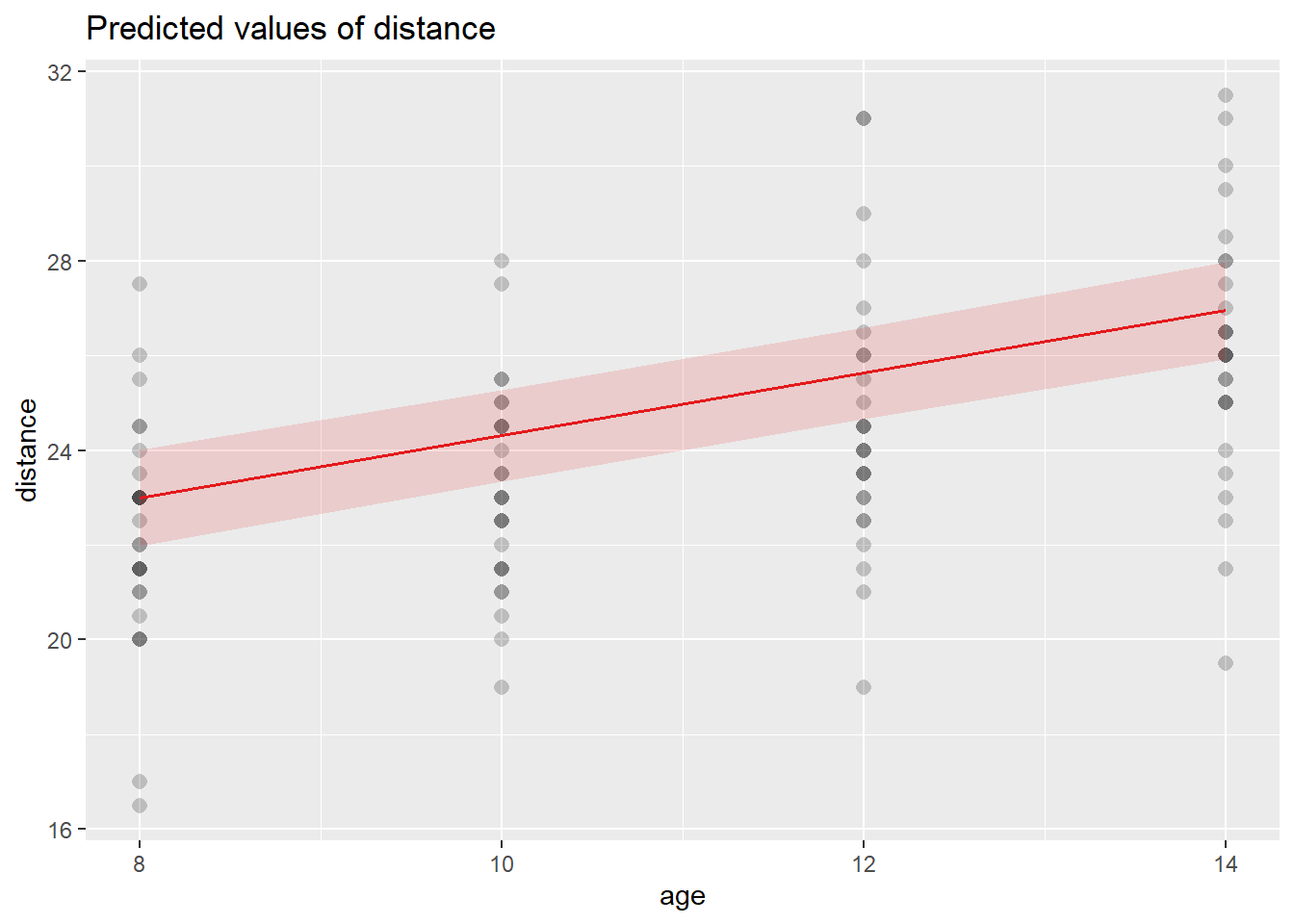

6.3.24 Model prediction

Prediction is where the model becomes operational. We generate:

- prediction intervals (interval="predict") for individual outcomes,

- confidence intervals (interval="confidence") for mean responses.

First, create a predictor dataset.

library(dplyr)

data_pred <- dplyr::select(data2 , zn , chas , nox , rm , dis , rad , tax ,

ptratio , lstat)

data_pred[1:10,]| zn | chas | nox | rm | dis | rad | tax | ptratio | lstat | |

|---|---|---|---|---|---|---|---|---|---|

| 1 | 0.2845483 | -0.2723291 | -0.1440749 | 0.4132629 | 0.2785465 | -0.9818712 | -0.6659492 | -1.4575580 | -1.0744990 |

| 2 | -0.4872402 | -0.2723291 | -0.7395304 | 0.1940824 | 0.6786919 | -0.8670245 | -0.9863534 | -0.3027945 | -0.4919525 |

| 4 | -0.4872402 | -0.2723291 | -0.8344581 | 1.0152978 | 1.1314532 | -0.7521778 | -1.1050216 | 0.1129203 | -1.3601708 |

| 5 | -0.4872402 | -0.2723291 | -0.8344581 | 1.2273620 | 1.1314532 | -0.7521778 | -1.1050216 | 0.1129203 | -1.0254866 |

| 6 | -0.4872402 | -0.2723291 | -0.8344581 | 0.2068916 | 1.1314532 | -0.7521778 | -1.1050216 | 0.1129203 | -1.0422909 |

| 7 | 0.0487240 | -0.2723291 | -0.2648919 | -0.3880270 | 0.9295961 | -0.5224844 | -0.5769480 | -1.5037485 | -0.0312367 |

| 8 | 0.0487240 | -0.2723291 | -0.2648919 | -0.1603069 | 1.0872565 | -0.5224844 | -0.5769480 | -1.5037485 | 0.9097999 |

| 9 | 0.0487240 | -0.2723291 | -0.2648919 | -0.9302853 | 1.1392843 | -0.5224844 | -0.5769480 | -1.5037485 | 2.4193794 |

| 10 | 0.0487240 | -0.2723291 | -0.2648919 | -0.3994130 | 1.3357787 | -0.5224844 | -0.5769480 | -1.5037485 | 0.6227277 |

| 11 | 0.0487240 | -0.2723291 | -0.2648919 | 0.1314594 | 1.2422167 | -0.5224844 | -0.5769480 | -1.5037485 | 1.0918456 |

6.3.24.1 Prediction interval (individual prediction)

Prediction intervals are wider because they include residual variability.

| fit | lwr | upr | |

|---|---|---|---|

| 1 | 30.258620 | 21.8534712 | 38.66377 |

| 2 | 24.415330 | 16.0263022 | 32.80436 |

| 4 | 31.759215 | 23.3073401 | 40.21109 |

| 5 | 29.920476 | 21.4789966 | 38.36196 |

| 6 | 26.441050 | 18.0254500 | 34.85665 |

| 7 | 20.820462 | 12.3963513 | 29.24457 |

| 8 | 15.455998 | 6.9807773 | 23.93122 |

| 9 | 8.991037 | 0.4048231 | 17.57725 |

| 10 | 16.144719 | 7.6745761 | 24.61486 |

| 11 | 13.847673 | 5.3305253 | 22.36482 |

6.3.24.2 Confidence interval (mean prediction)

Confidence intervals for the mean response are narrower than prediction intervals.

| fit | lwr | upr | |

|---|---|---|---|

| 1 | 30.258620 | 29.329961 | 31.18728 |

| 2 | 24.415330 | 23.646134 | 25.18452 |

| 4 | 31.759215 | 30.474666 | 33.04376 |

| 5 | 29.920476 | 28.706202 | 31.13475 |